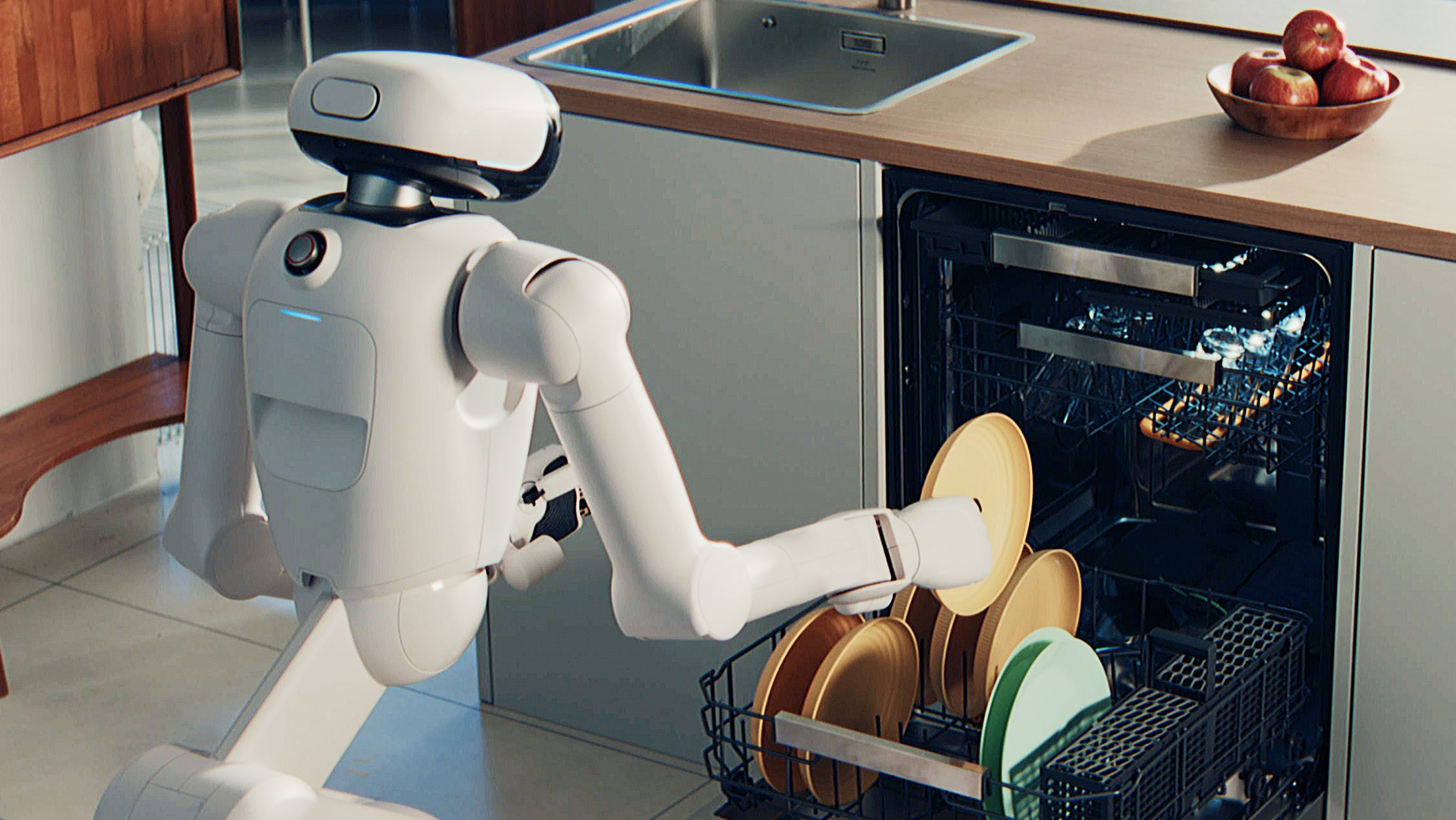

LG has unveiled its humanoid robot that can handle household chores. After teasing the CLOiD last week, the company has offered its first look at the AI-powered robot it claims can fold laundry, unload the dishwasher, serve food and help out with other tasks.

The CLOiD has a surprisingly cute "head unit" that's equipped with a display, speakers, cameras and other sensors. "Collectively, these elements allow the robot to communicate with humans through spoken language and 'facial expressions,' learn the living environments and lifestyle patterns of its users and control connected home appliances based on its learnings," LG says in its press release.

The robot also has two robotic arms, complete with shoulder, elbow and wrist joints, and hands with fingers that can move independently. The company didn't share images of the CLOiD's base, but the base uses wheels and technology similar to what the appliance maker has used for robot vacuums. LG notes that the robot's arms can pick up objects that are "knee level" and higher, so it won't be able to pick things up from the floor.

LG says it will show off the robot completing common chores in a variety of scenarios, like starting laundry cycles and folding freshly washed clothes. The company also shared images of it taking a croissant out of the oven, unloading plates from a dishwasher and serving a plate of food. Another image shows it standing alongside a woman in the middle of a home workout, though it's not clear how the CLOiD is helping with the workout.

We'll get a closer look at the CLOiD and its laundry-folding abilities once the CES show floor opens later this week, so we should get a better idea of just how capable it is. For now, it sounds like LG intends this to be more of a concept than a product it actually plans to sell. The company says that it will "continue developing home robots with practical functions and forms for housework" and also bring its robotics technology to more of its home appliances, like refrigerators with doors that can automatically open.