Arcade machines once thrived as cultural objects as much as entertainment devices, combining bold industrial design and tactile controls to pull people into endless play. Over time, those cabinets became symbols of fixed experiences, each game defined by predictable patterns and tightly programmed outcomes. Conway’s Arcade revisits that familiar physical form but challenges the very idea of what an arcade game is supposed to be. It does so by treating computation not as hidden infrastructure but as the driving force behind play itself.

Created by technology agency SpecialGuestX for Google, Conway’s Arcade is a generative gaming installation that transforms classic arcade logic into an evolving, rule-based system. Unveiled at the NeurIPS 2025 conference, the project was designed to communicate complex computational ideas through direct interaction, replacing static gameplay with experiences that emerge in real time.

Designer: SpecialGuestX

Instead of loading pre-existing games, the system generates new gameplay variations inspired by well-known titles such as Space Invaders, Breakout, Flappy Bird, and the Chrome Dino game, recomposing their mechanics through adaptive logic. The conceptual backbone of Conway’s Arcade is John Conway’s Game of Life, a cellular automaton in which a few simple rules governing cells on a grid lead to unexpectedly complex patterns.
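For readers unfamiliar with the Game of Life, its rules fit in a few lines of code. The sketch below shows the standard rules only (a dead cell with exactly three live neighbors is born, a live cell with two or three live neighbors survives); it is not the installation's software, and the names `life_step` and `glider` are illustrative choices rather than anything published by SpecialGuestX or Google.

```python
from collections import Counter


def life_step(live_cells):
    """Advance Conway's Game of Life by one generation.

    live_cells is a set of (x, y) tuples marking the currently live cells
    on an unbounded grid. Returns the set of live cells in the next generation.
    """
    # For every cell adjacent to a live cell, count its live neighbors.
    neighbor_counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live_cells
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth: exactly 3 live neighbors.
    # Survival: an already-live cell with exactly 2 (or 3) live neighbors.
    return {
        cell
        for cell, count in neighbor_counts.items()
        if count == 3 or (count == 2 and cell in live_cells)
    }


# A "glider": five cells that crawl diagonally across the grid,
# a well-known example of complex behavior emerging from the simple rules.
glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}

state = glider
for _ in range(4):
    state = life_step(state)
# After four generations the glider has the same shape,
# shifted one cell along each axis.
```

Nothing in the code says "move diagonally"; the glider's travel is a side effect of the birth and survival rules, which is the kind of emergent behavior the installation builds on.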

SpecialGuestX translated this principle into a playable framework where movement, collision, and behavior are determined dynamically rather than scripted in advance. Player input influences how these rules evolve, meaning each session becomes a unique computational outcome rather than a repeatable level sequence. Familiar visual language and controls anchor the experience, while the underlying logic continually reshapes how the game behaves.

This generative approach is powered by adaptive systems that respond to interactions in real time, making the arcade experience feel intuitive while remaining unpredictable. Players begin to sense patterns and relationships as they play, learning the logic through experimentation rather than instruction. The result is an experience that rewards curiosity, turning gameplay into a form of exploration rather than mastery over fixed mechanics.

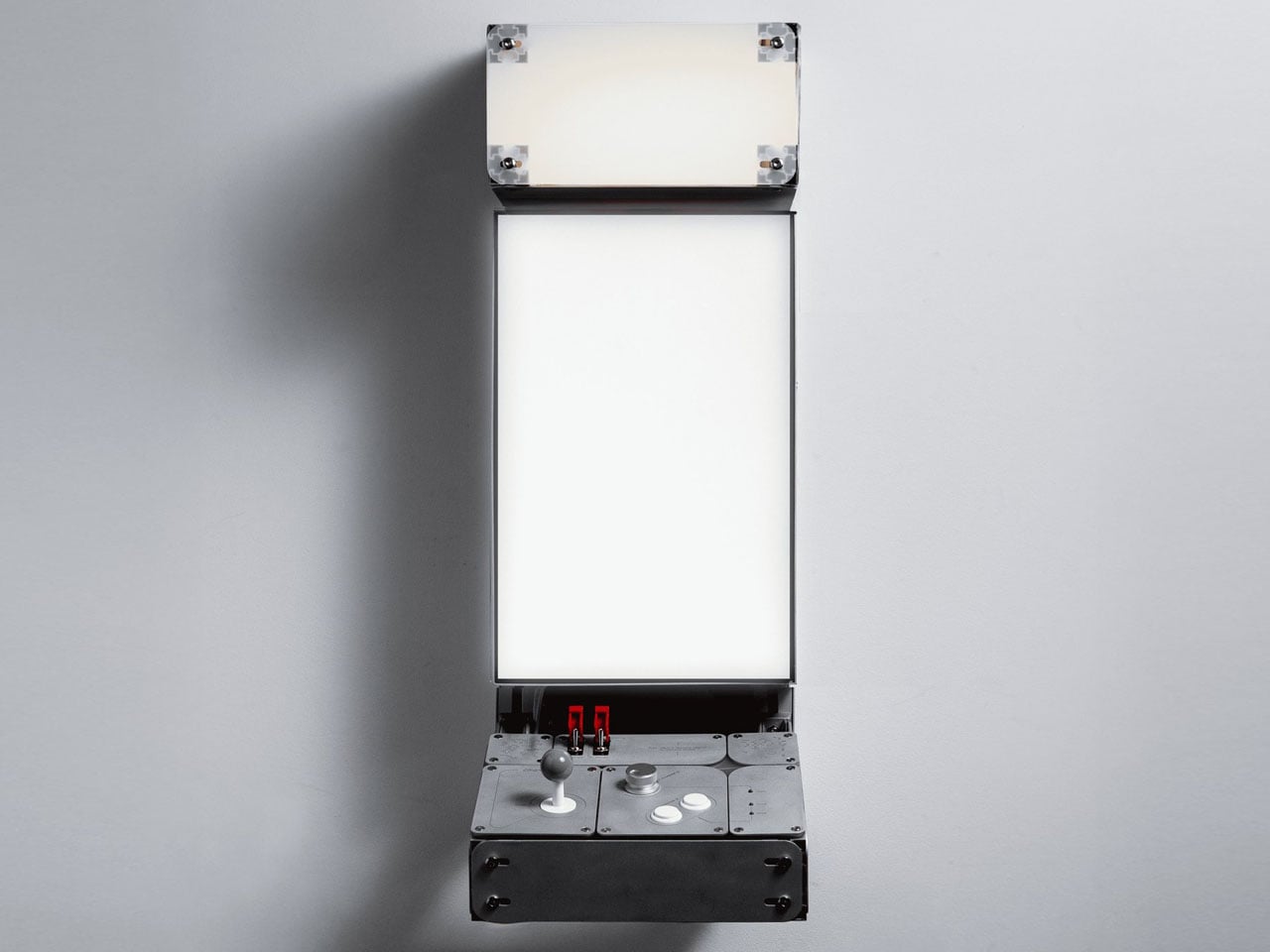

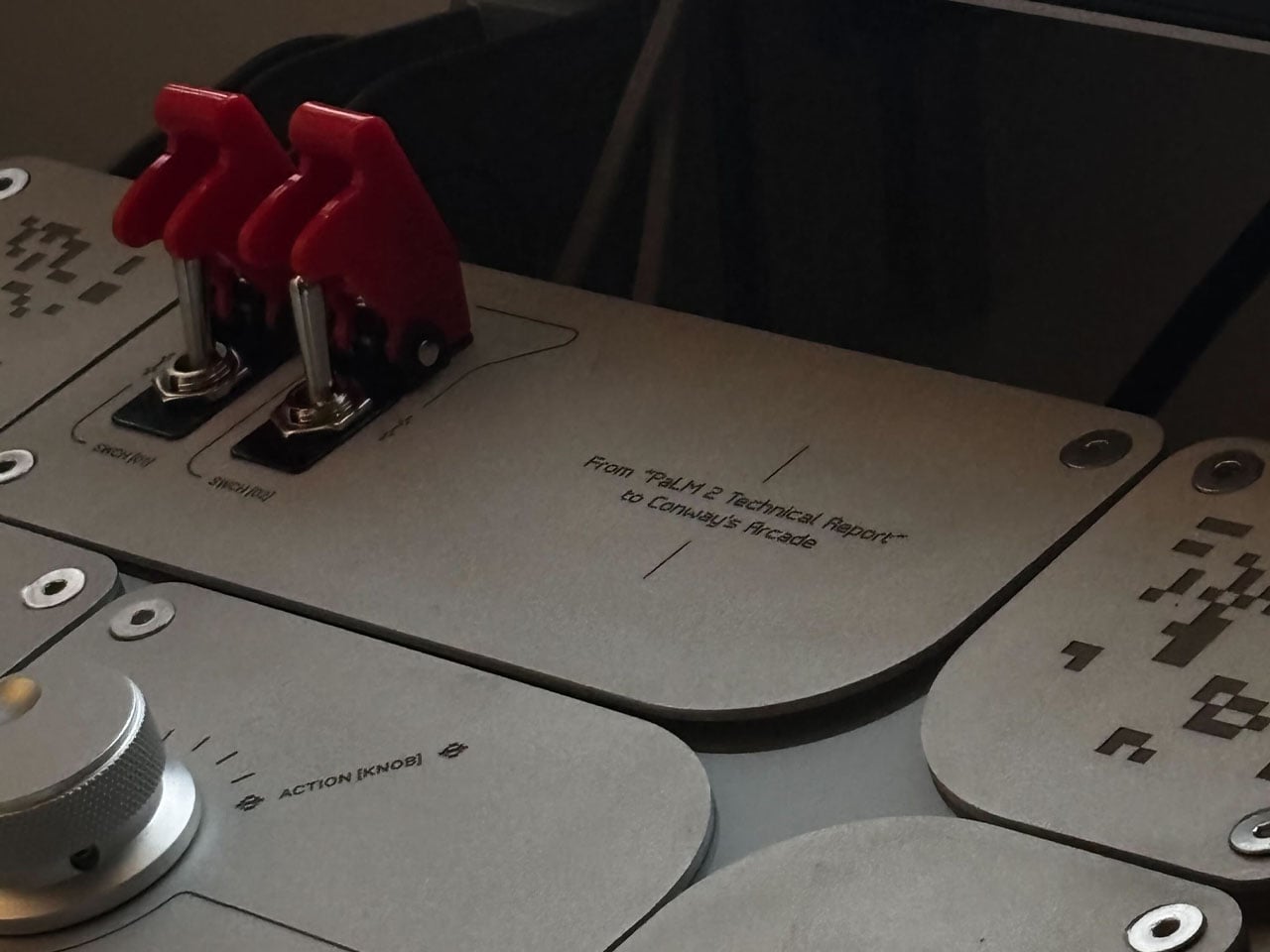

The physical design of Conway’s Arcade reinforces this philosophy. The cabinet is constructed entirely from aluminum and designed as a lightweight, modular structure that can be assembled by a single person in under an hour. Fabricated by Barcelona-based workshop 6punyales, the hardware balances durability with portability, making it suitable for exhibitions and travel. Mechanical joysticks, tactile buttons, and red latched switches reference classic arcade interfaces, while clean lines, exposed structure, and a custom typeface give the machine a distinctly contemporary presence.

Visuals follow a restrained 8-bit aesthetic, not as nostalgia for its own sake but as a clear, readable interface for generative behavior. On screen, game elements act like independent agents within a system, making the effects of rule changes visible and understandable. Rather than hiding computation behind spectacle, Conway’s Arcade puts logic on display, using play as the medium for comprehension.

Commissioned by Google and presented to an audience deeply familiar with artificial intelligence and machine learning, Conway’s Arcade succeeds by making abstract ideas accessible. It reframes the arcade cabinet as a tool for communication, showing how simple rules can generate complexity, creativity, and the element of surprise.


The post AI-powered Conway’s Arcade not only plays classic games, it invents them in real-time first appeared on Yanko Design.