For a long time, AI tools have been limited to graphic work, with little of it spilling over into tangible industrial design. Sure, you could create logos and artwork for your products, but you still can’t “create” products using AI, and that’s something xTool is hoping to change with its new software, AIMake. Simply put, AIMake is a GenAI tool that produces graphical output fine-tuned for industrial design. With a prompt-based interface, AIMake lets you create artwork that’s ready for 2D printing, laser etching/engraving, and even embossing. It offers as many as 70 different styles to apply to your prompt, generating everything from ready-to-print logos to editable SVGs for screen printing, and even low-relief 3D engraving.

Alongside AIMake, xTool is also unveiling DesignFind, an asset marketplace where DIY enthusiasts and professional creators can access free and paid project files from users across the globe.

Generate Ready-To-Use Artwork for Laser Cutting and Screen Printing

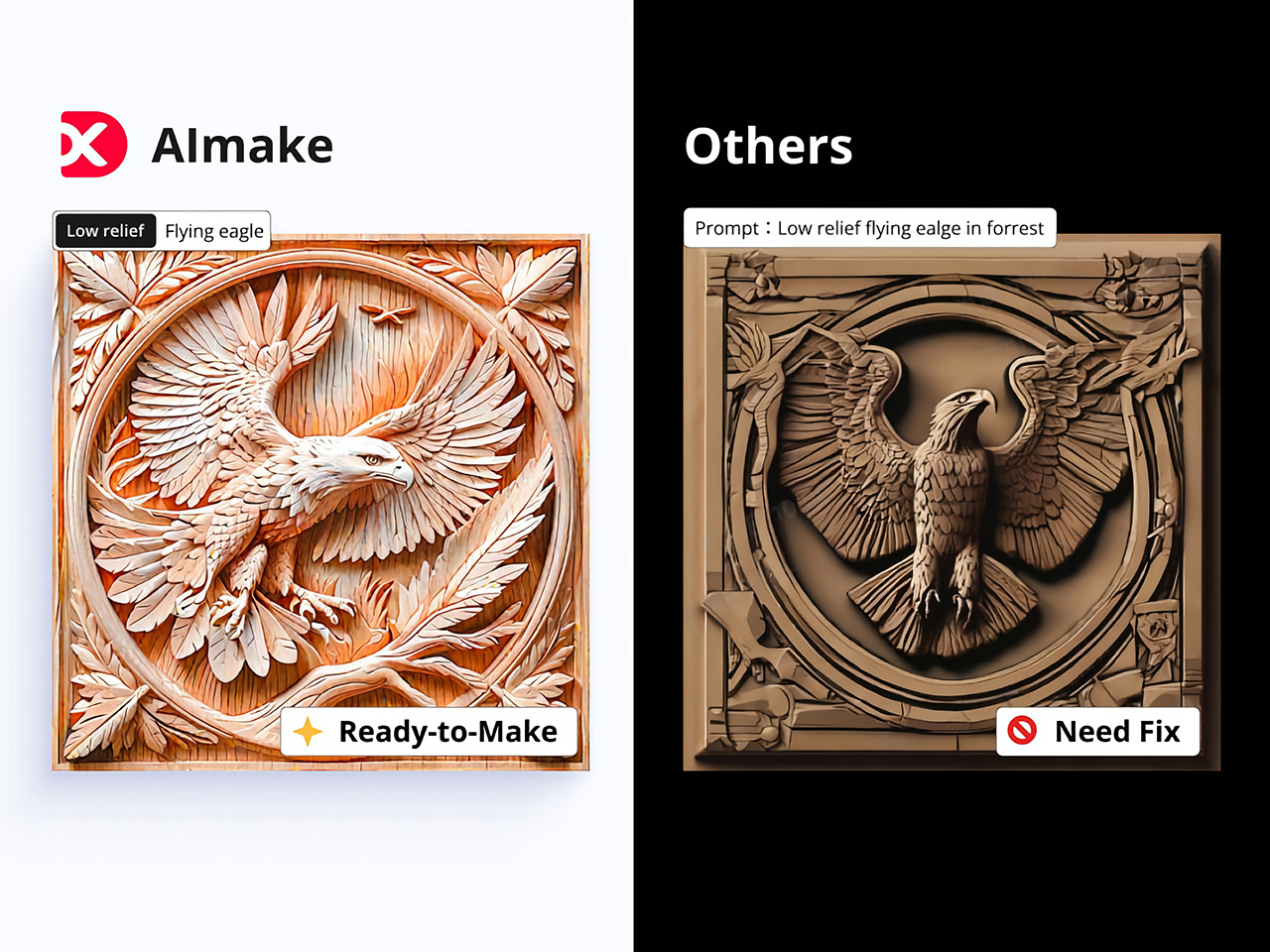

Traditionally, preparing files for laser engraving or screen printing has been complex and technical, involving vector graphics software, high-resolution images, or detailed edits to achieve the clean lines and solid contrast these processes require. AIMake lets users skip these steps: designers can enter a descriptive prompt, such as “geometric fox engraving with high contrast,” and watch as the AI turns the concept into a polished, ready-to-engrave file.

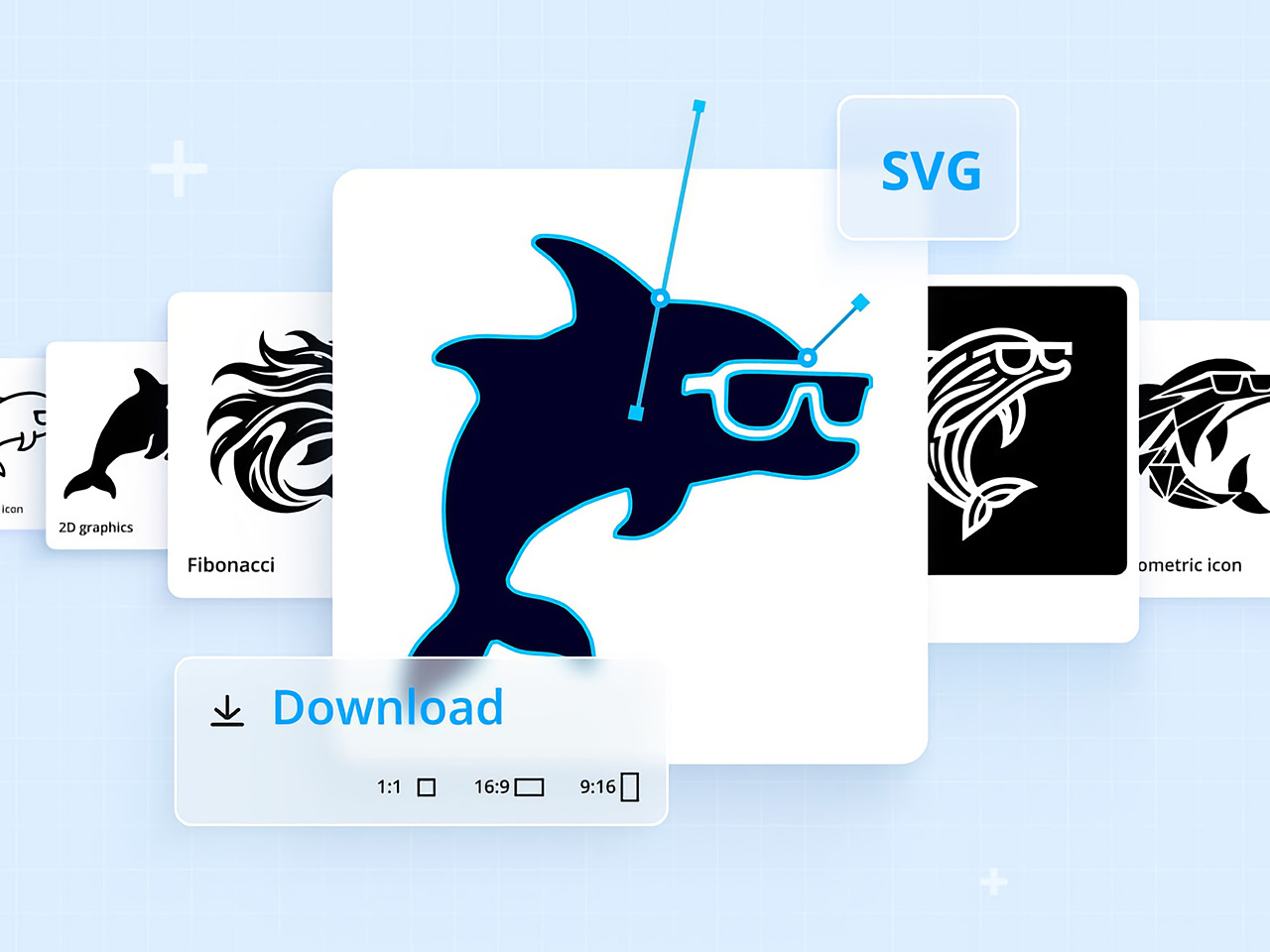

This direct-to-laser capability marks a shift for designers, offering an AI tool that reduces reliance on graphics software and tedious vector adjustments. AIMake generates crisp, high-contrast designs that work directly with xTool’s laser engravers, so the visual quality matches what physical production requires. Whether you’re creating art for metal, wood, or glass, AIMake optimizes the image for the specific demands of laser engraving, relief carving, and screen printing.

From Vision to Reality with Prompt-Based Design

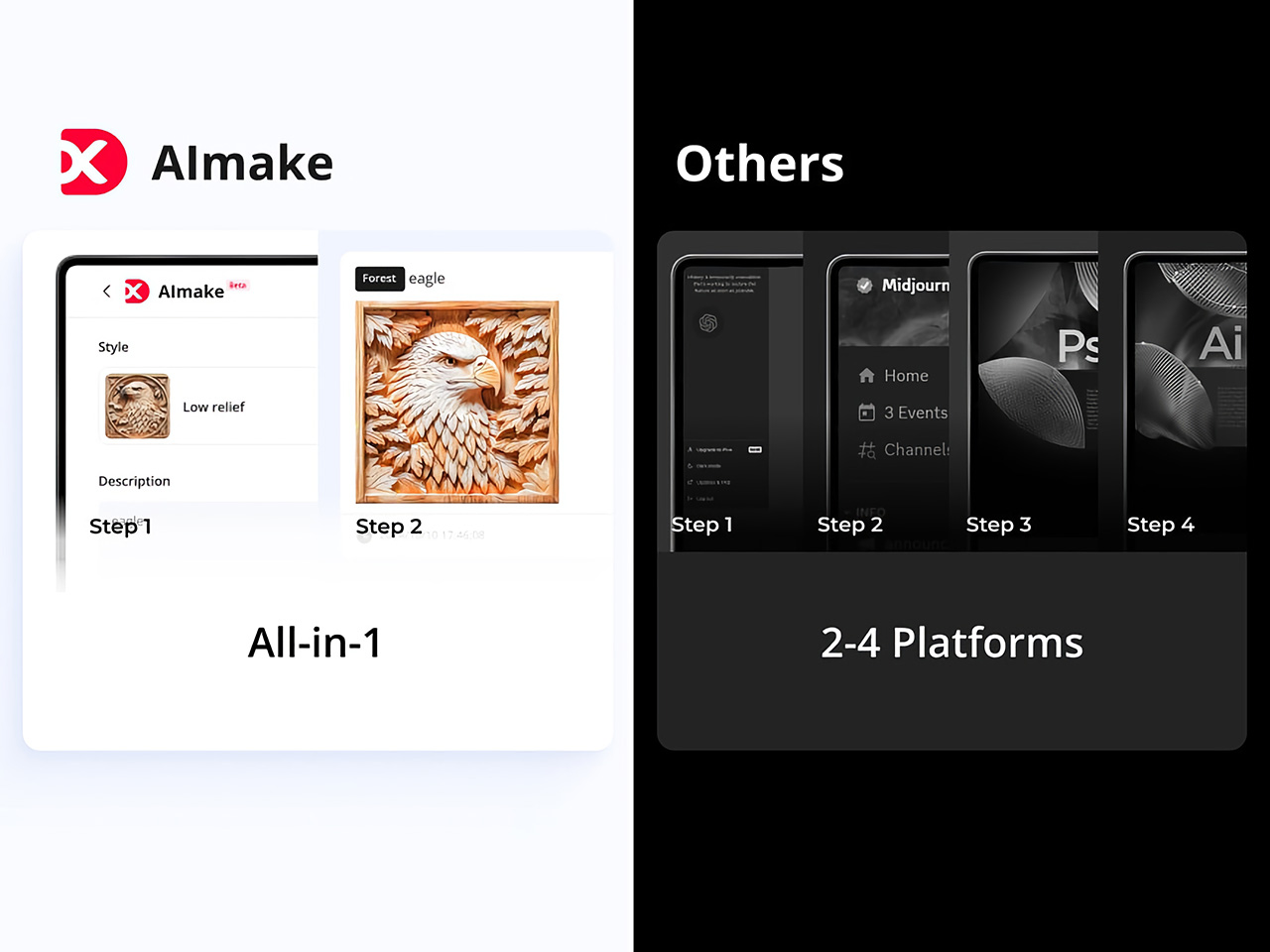

AIMake’s intuitive, prompt-based design system is key to its simplicity. Users enter a text description, anything from detailed portrait specs to broad aesthetic concepts, and the app’s AI engine generates a corresponding laser-compatible design. This lets users focus on creativity and concept rather than technical adjustments, leaving the AI to refine the design automatically.

With this setup, designers of all backgrounds can produce intricate laser-cut images without advanced vector graphics skills. For example, a prompt like “portrait of a lion, full mane, high detail, silhouette” can produce a design with defined, laser-friendly lines and strong contrast, ready for immediate use on xTool’s compatible engravers. The system pairs the efficiency of AI rendering with the tactile quality of handmade engraving, streamlining the creative workflow for designers and hobbyists alike.

Intuitive Controls Make AI Creation Easy for Beginners and Experts

xTool designed AIMake with both ease of use and versatility in mind, giving beginners and seasoned pros the tools they need. New users can select from preset styles, such as vector templates or laser-friendly text effects, while advanced users get controls for adjusting details like line thickness and contrast.

Integration with xTool hardware, such as the D1 and M1 laser engravers, enables immediate testing and adjustments on physical materials, which saves time and minimizes material waste. For designers looking to perfect a project, these tools and settings cut down on the trial and error that other engraving software often requires. The integration also lets users tackle complex designs with more confidence that the final piece will match the original vision.

DesignFind: A Creative Hub for DIY and Professional Makers

DesignFind is a versatile platform for DIY enthusiasts and professional creators alike, offering a wide array of design assets for laser cutting, engraving, and more. It supports materials like wood and acrylic, and users can explore diverse project categories, from home decor and jewelry to seasonal gifts for holidays and special occasions.

Key features include a library of free, shared templates (such as cutting boards and multi-layered designs) and premium assets like 3D models and detailed SVG files. AIMake users can also draw on DesignFind’s resources, making it easy to combine AI-generated designs with the platform’s extensive community assets. The “Featured Creators” section showcases work from top designers, giving new and seasoned creators inspiration in the form of unique, ready-to-use projects.

Practical Applications Across Different Industries

AIMake’s functionality extends beyond personal design and has practical applications across various industries. Designers working with an array of fabrication devices (especially ones from xTool) can use AIMake to create logos, product designs, or custom merchandise. Small businesses can use it to create professional, engraving-ready designs without needing graphic design software, and crafters can generate unique pieces with ease.

In education, AIMake is a valuable asset, offering schools and makerspaces a chance to introduce students to digital design with hands-on projects. By using simple text inputs, students can quickly visualize and produce projects without needing prior experience in traditional graphic design tools. This flexibility gives learners and instructors alike more freedom to explore digital fabrication.

AIMake and the Future of Design Tools

AIMake sets a new standard for GenAI in tangible product design, showing how generative AI can enhance the design process and make it more accessible, practical, and enjoyable. As AI technology continues to evolve, tools like AIMake point toward a future where design is faster yet remains firmly in creators’ control.

For anyone working in creative design, AIMake offers an engaging blend of functionality and innovation, serving as both a creative assistant and a precision tool. Whether you’re producing intricate artwork, branding assets, or custom goods, AIMake’s versatile platform has a little something for everyone. With AIMake, xTool is opening new pathways for artists and designers, one prompt at a time.

The post This AI Tool Directly Generates Laser Cutting and CNC Project Files: Meet DesignFind AIMake first appeared on Yanko Design.