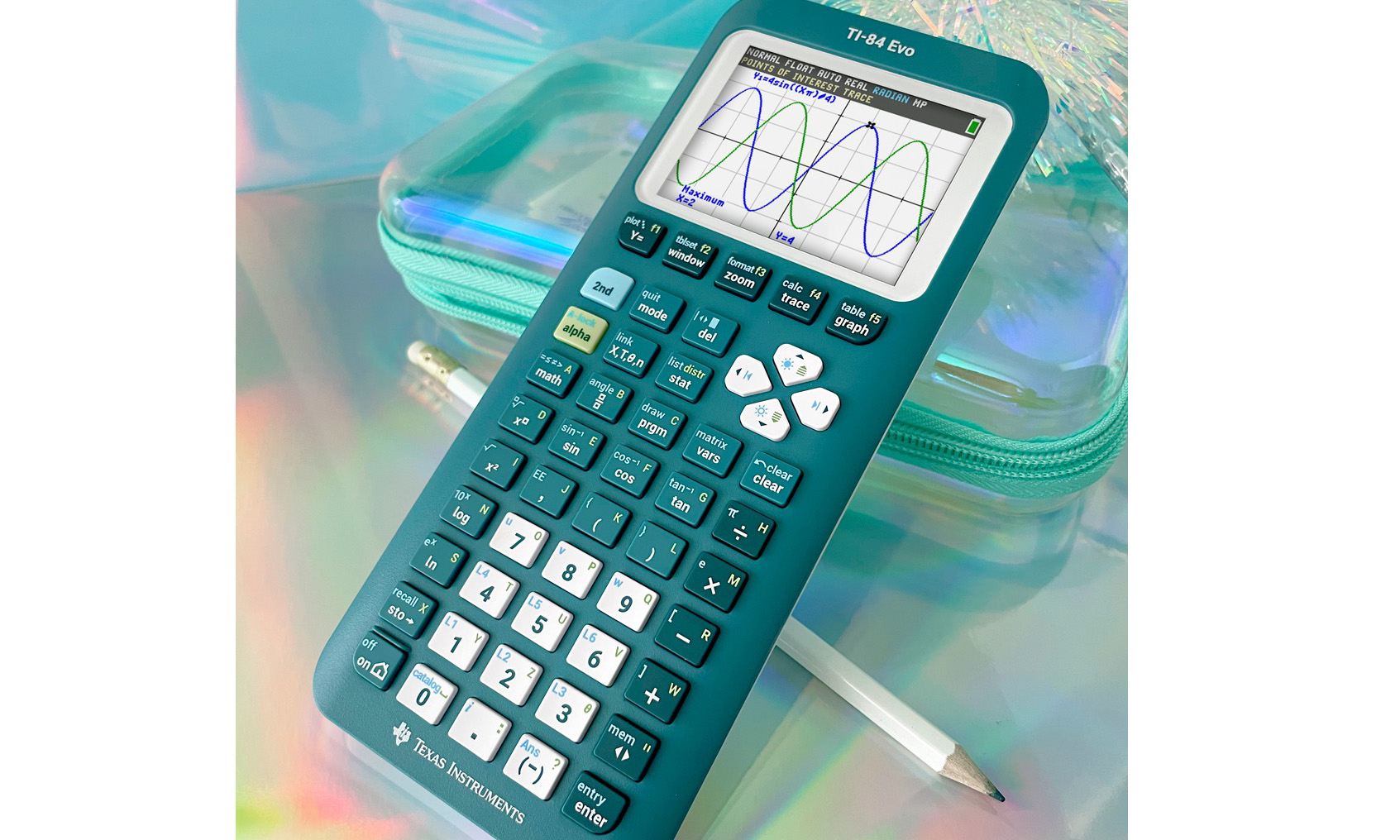

Texas Instruments graphing calculators have helped many a student with algebra, pre-calculus and upside-down anatomical slang. Now, the company is back with an upgrade for the modern world, the TI-84 Evo. The new device lets you get your math on with a faster processor, a new icon-based home screen and a redesigned keypad.

TI is marketing it as something akin to the Light Phone of calculators. Unlike calculator apps on phones or computers, the "distraction-free" TI-84 Evo is a single-purpose device "designed to do one thing exceptionally well — math." Without notifications, social media apps or even Wi-Fi, there's less to draw your focus away from the math problems at hand. (However, there will always be the sidesplittingly funny "58008" to relieve your boredom.)

The new model's processor is three times faster than its predecessor's. The calculator also offers 50 percent more graphing space, a simplified keypad and USB-C charging, plus a new feature that lets you trace along a graph to find points of interest.

The TI-84 Evo is available now. Individual customers will pay $160. (School districts can contact the company for bulk pricing.) The calculator ships in a modern array of colors: white (the standard model), mint, pink, purple, teal, raspberry and silver.

This article originally appeared on Engadget at https://www.engadget.com/mobile/texas-instruments-made-a-new-flagship-graphing-calculator-the-ti-84-evo-201903438.html?src=rss