Mark Zuckerberg changed his company’s name to Meta in October 2021 because he believed the future was virtual. Not just sort-of virtual, like Instagram filters or Zoom calls, but capital-V Virtual: immersive 3D worlds where you’d work, socialize, and live a parallel digital life through a VR headset. Four years and roughly $70 billion in cumulative Reality Labs losses later, Meta is quietly dismantling that vision. In January 2026, the company laid off around 1,500 people from its metaverse division, shut down multiple VR game studios, killed its VR meeting app Workrooms, and effectively admitted that the grand bet on virtual reality had failed. Investors barely blinked. The stock went up.

The official line now is that Meta is pivoting to AI and wearables. Zuckerberg spent much of 2025 building what he calls a “superintelligence” lab, hiring top-tier AI talent with eye-watering compensation packages that are now one of the largest drivers of Meta’s 2026 expense growth. The company released Llama models that benchmark decently against OpenAI and Google, embedded chatbots into WhatsApp and Instagram, and now talks constantly about “AI agents” and “new media formats.” But from a product and profit perspective, Meta’s AI strategy looks suspiciously like its metaverse strategy: lots of spending, vague promises, and no breakout consumer experience that people actually love. Meanwhile, the thing that is quietly working, the thing people are buying and using in the real world, is a pair of $300 smart glasses that Meta treats mainly as a vehicle for its AI pitch. If this sounds like a pattern, that’s because it is. Meta has now misread the future twice in a row, and both times the answer was hiding in plain sight.

The Metaverse Was a $70 Billion Fantasy

Reality Labs has been hemorrhaging money since late 2020. As of early 2026, cumulative operating losses sit somewhere between $70 and $80 billion, depending on how you slice the quarters. In the third quarter of 2025 alone, Reality Labs posted a $4.4 billion loss on $470 million in revenue. For 2025 as a whole, the division lost more than $19 billion. These are not rounding errors or R&D investments that will pay off next year. These are structural losses tied to a product category, VR headsets and metaverse platforms, that the market simply does not want at the scale Meta imagined.

The vision sounded compelling in a keynote. You would strap on a Quest headset, meet your coworkers in a virtual conference room with floating whiteboards, then hop over to Horizon Worlds to hang out with friends as legless avatars. The problem was that almost no one wanted to do any of that for more than a demo. VR remained a niche gaming platform with occasional fitness and entertainment use cases, not the next paradigm shift in human interaction. Zuckerberg kept insisting the breakthrough was just around the corner. He was wrong, and the January 2026 layoffs and studio closures were the formal acknowledgment that Reality Labs as originally conceived was dead.

The irony is that Meta actually had a potential killer app inside Reality Labs, and it murdered it. Supernatural, a VR fitness game that Meta acquired for $400 million in 2023, was one of the few pieces of Quest software that generated genuine user loyalty and recurring revenue. People who used Supernatural regularly described it as the most effective home workout they had ever done, combining rhythm-based gameplay with full-body movement in a way that treadmills and Peloton bikes could not replicate. It had a subscription model, a dedicated community, and real retention. In January 2026, Meta moved Supernatural into “maintenance mode,” which is corporate speak for “we fired almost everyone and it will get no new content.” If you are trying to prove that VR has mainstream utility beyond gaming, fitness is one of the most obvious wedges. Meta had that wedge, and it chose to kill it in the same round of cuts that shuttered studios working on Batman VR games and other prestige titles. The message was clear: Zuckerberg had lost interest in Quest, even the parts that worked.

The AI Bet That Looks Like Metaverse Bust 2.0

After spending years insisting the future was virtual worlds, Meta pivoted hard to AI in 2023 and 2024. Zuckerberg now talks about AI the way he used to talk about the metaverse: with sweeping language about paradigm shifts and transformative platforms. The company stood up an AI division focused on building what it calls “superintelligence,” hired aggressively from OpenAI and Anthropic, and made technical talent compensation the second-largest contributor to Meta’s 2026 expense growth behind infrastructure. This is not a side project. Meta is spending billions on AI research, training, and deployment, even as Zuckerberg expects Reality Labs losses to remain near 2025 levels in 2026 before they start to taper.

From a technical standpoint, Meta’s AI work is solid. The Llama family of models is legitimately competitive with GPT-4 class systems and has found real adoption among developers who want open-source alternatives to OpenAI and Google. Meta’s internal AI is also driving real business value in ad targeting, content ranking, and moderation. Those systems work, and they contribute directly to Meta’s core revenue. But from a consumer product perspective, Meta’s AI feels scattered and often unnecessary. The company has embedded “Meta AI” chatbots into WhatsApp, Instagram, Messenger, and Facebook, none of which feel like natural places for a chatbot. Instagram’s feed is increasingly stuffed with AI-generated images and engagement bait that users actively complain about. Meta has launched character-based AI bots tied to influencers and celebrities, and approximately no one uses them. The gap between “we have impressive models” and “we have a product people love” is enormous, and it is the exact same gap that sank the metaverse.

What Meta is missing, again, is product intuition. OpenAI built ChatGPT and made it feel like the future because the interface was simple, the use cases were obvious, and it delivered consistent value. Google integrated Gemini into Search and productivity tools where users were already working. Meta, by contrast, seems to be throwing AI at every surface it controls and hoping something sticks. Zuckerberg talks about “an explosion of new media formats” and “more interactive feeds,” which in practice means more algorithmic slop and fewer posts from people you actually know. Analysts are starting to notice. One Bernstein note from early 2026 argued that the “winner” criterion in AI is shifting from model quality to product usage, which is a polite way of saying that having a great model does not matter if your product is annoying. Meta has a great model. Its products are annoying.

The financial picture is also murkier than Meta would like to admit. Reality Labs is still losing close to $20 billion a year, and while AI is not a separate reporting segment, the talent and infrastructure costs are clearly rising. Meta’s overall revenue growth is strong, driven by advertising, but the company is not yet showing a clear path to AI profitability outside of ad optimization. That puts Meta in the awkward position of having pivoted from one unprofitable moonshot (metaverse) to another potentially unprofitable moonshot (consumer AI products) while the actual profitable parts of the business, social ads and engagement, keep the lights on. This is a pattern, and it is not a good one.

The Smart Glasses Lead That Meta Is Poised to Lose

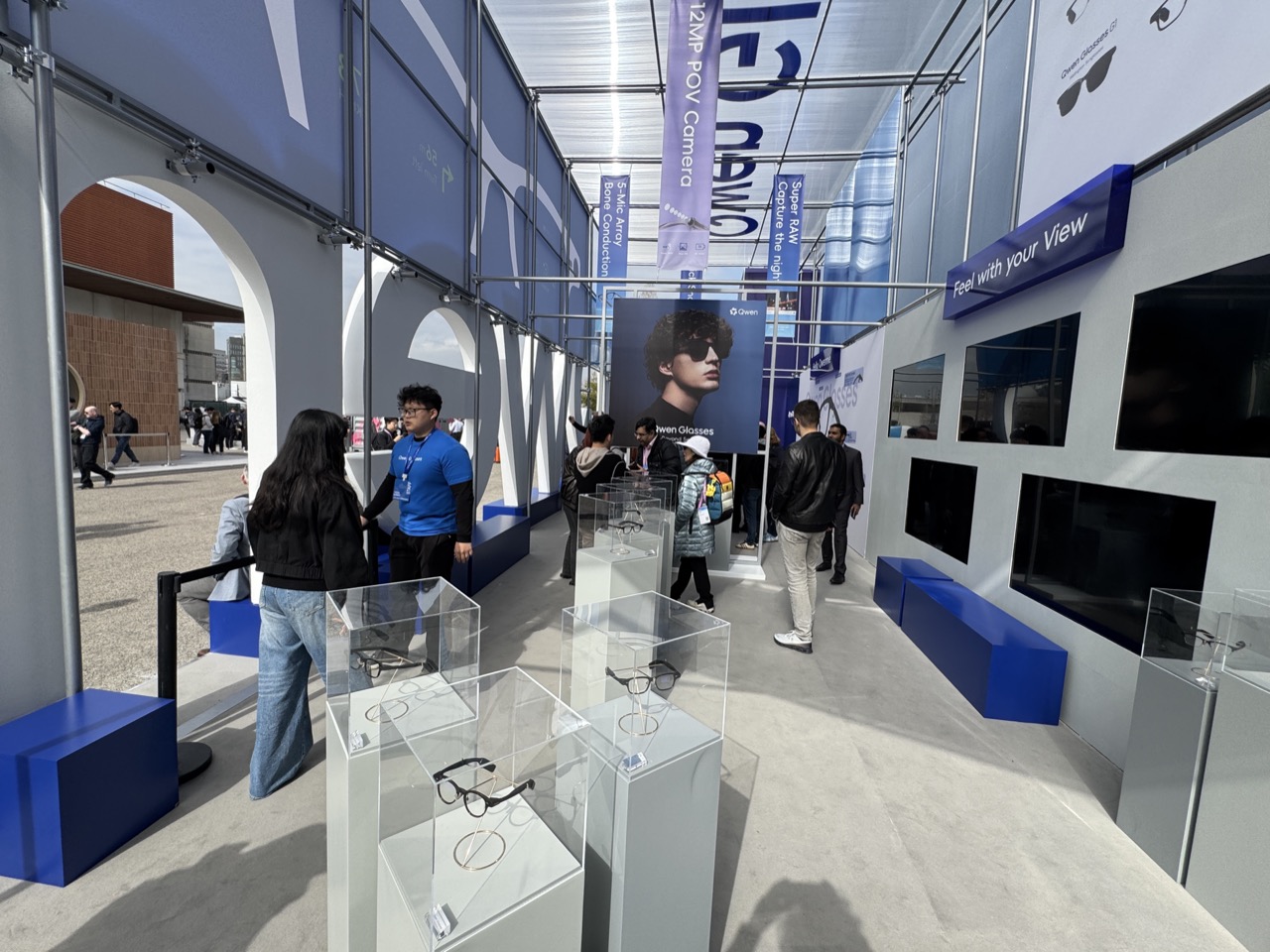

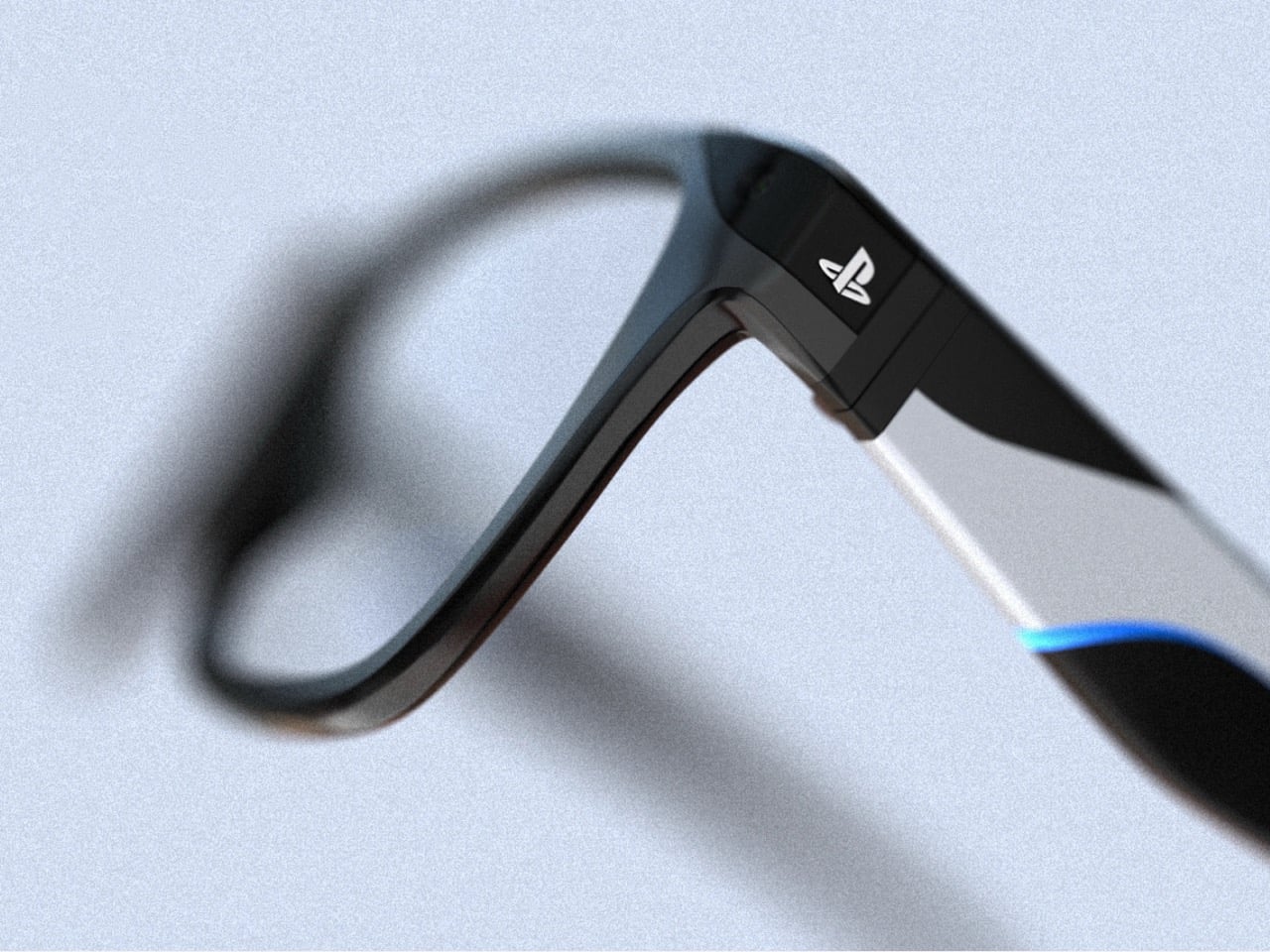

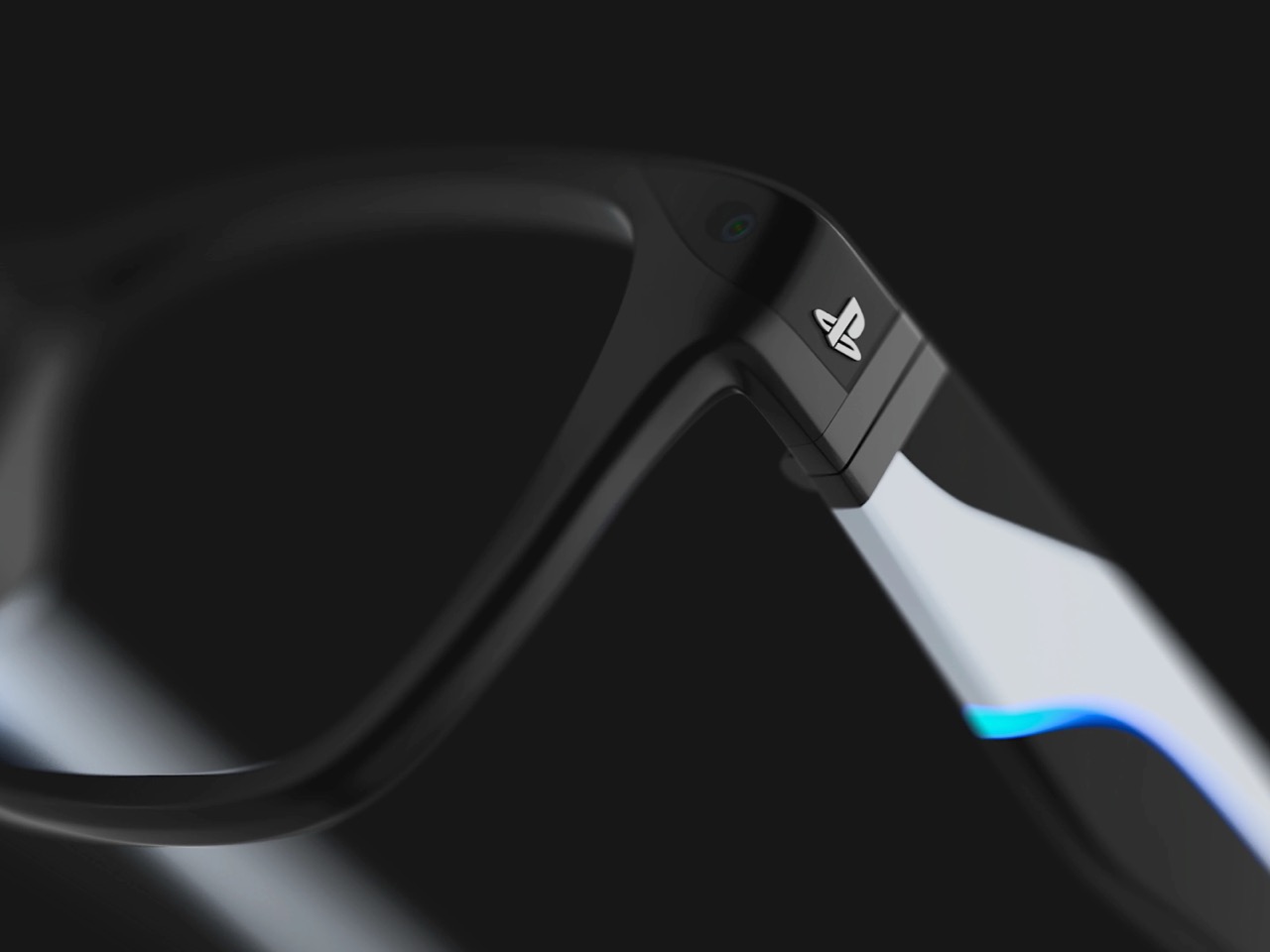

Meta talks about the Ray-Ban smart glasses constantly. Zuckerberg calls them the “ultimate incarnation” of the company’s AI vision, and the pitch is relentless: sales more than tripled in 2025, the glasses represent the future of ambient computing, this is the post-smartphone platform. The problem is not that Meta is ignoring the glasses. The problem is that Meta is about to squander a massive early lead, and the competition is closing in fast. 2026 is shaping up to be a blockbuster year for smart glasses. Samsung confirmed its AR glasses are launching this year. Google is releasing its first pair of smart glasses since 2013, an audio-only pair similar to the Ray-Ban Meta glasses. Apple is reportedly pursuing its own smart glasses and shelved plans for a cheaper Vision Pro to prioritize the project. Meta dominated VR because it was early, cheap, and had no real competition. In smart glasses, that window is shutting, and the field is getting crowded with all kinds of names, from smaller players like Looktech and Xgimi’s MemoMind, to mid-sized brands like Xreal, to giants like Google, TCL, and Xiaomi.

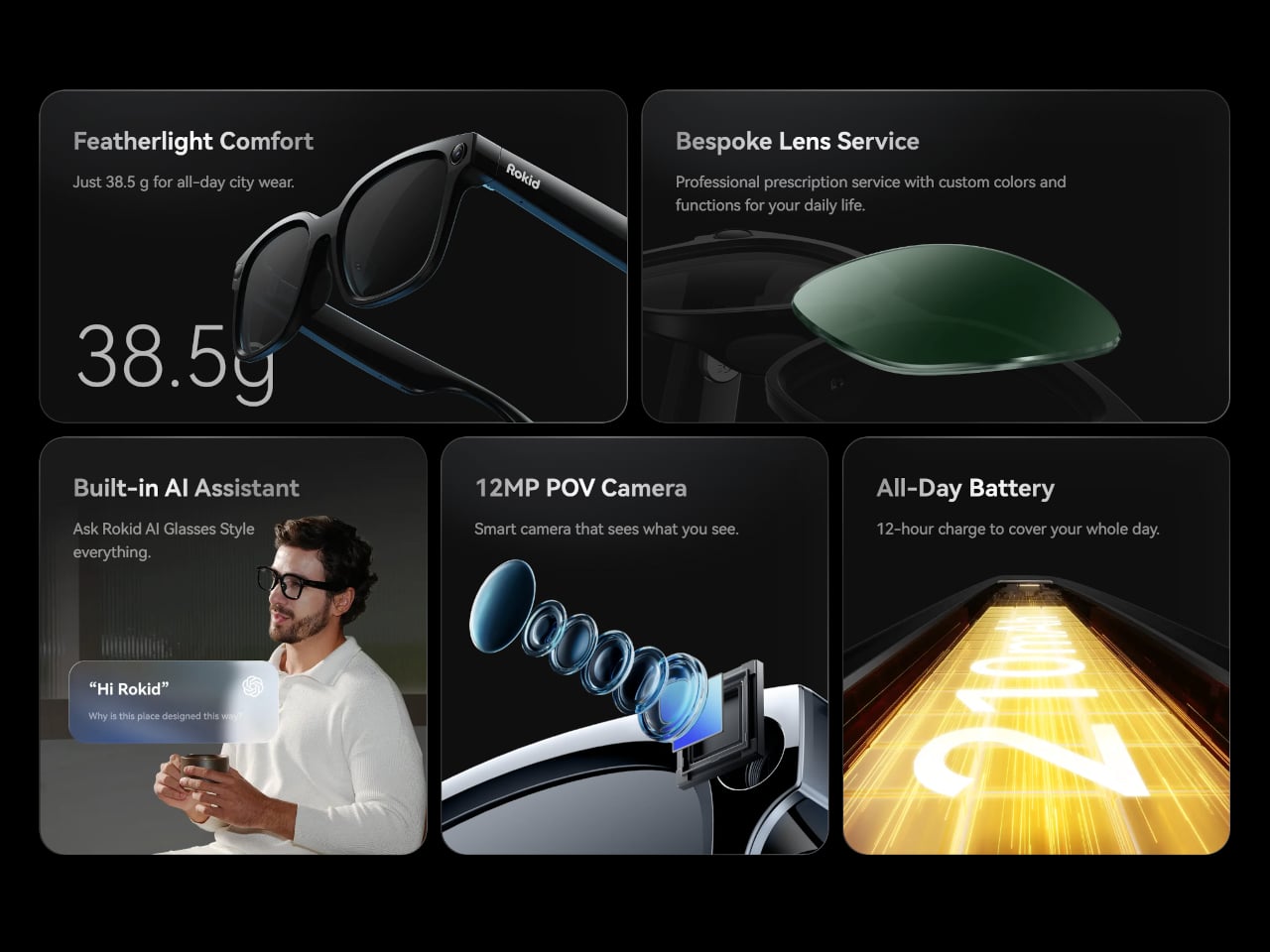

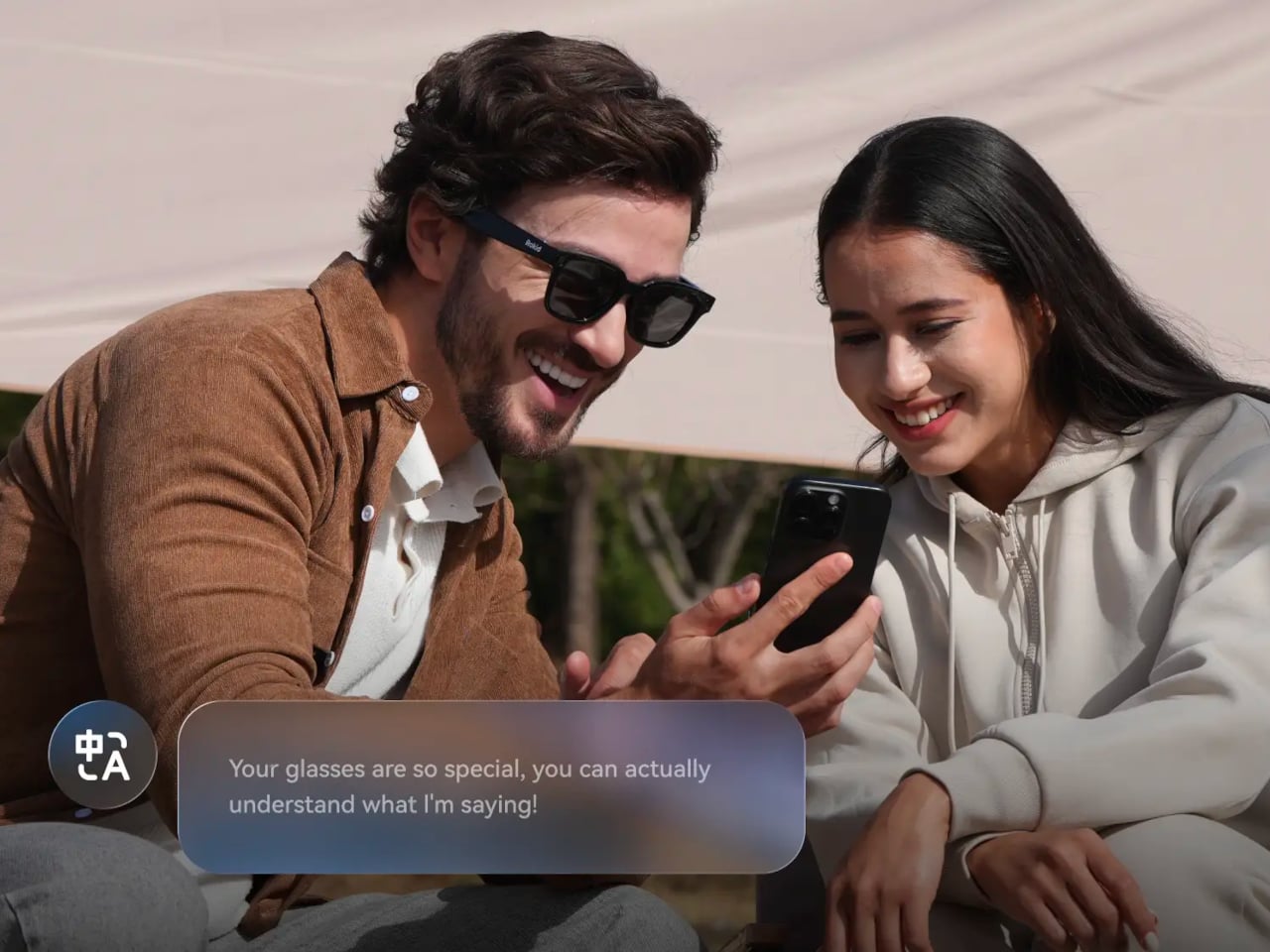

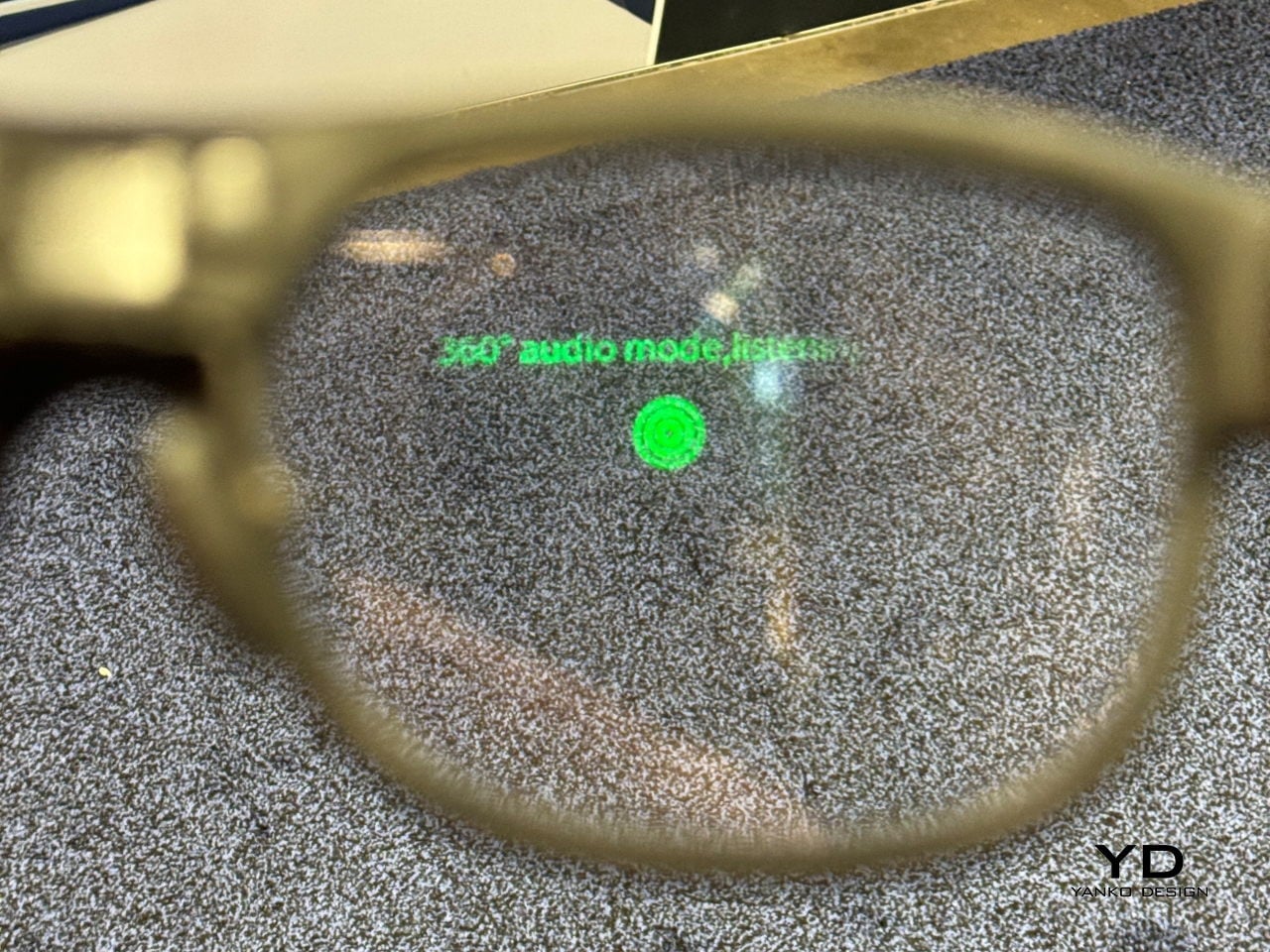

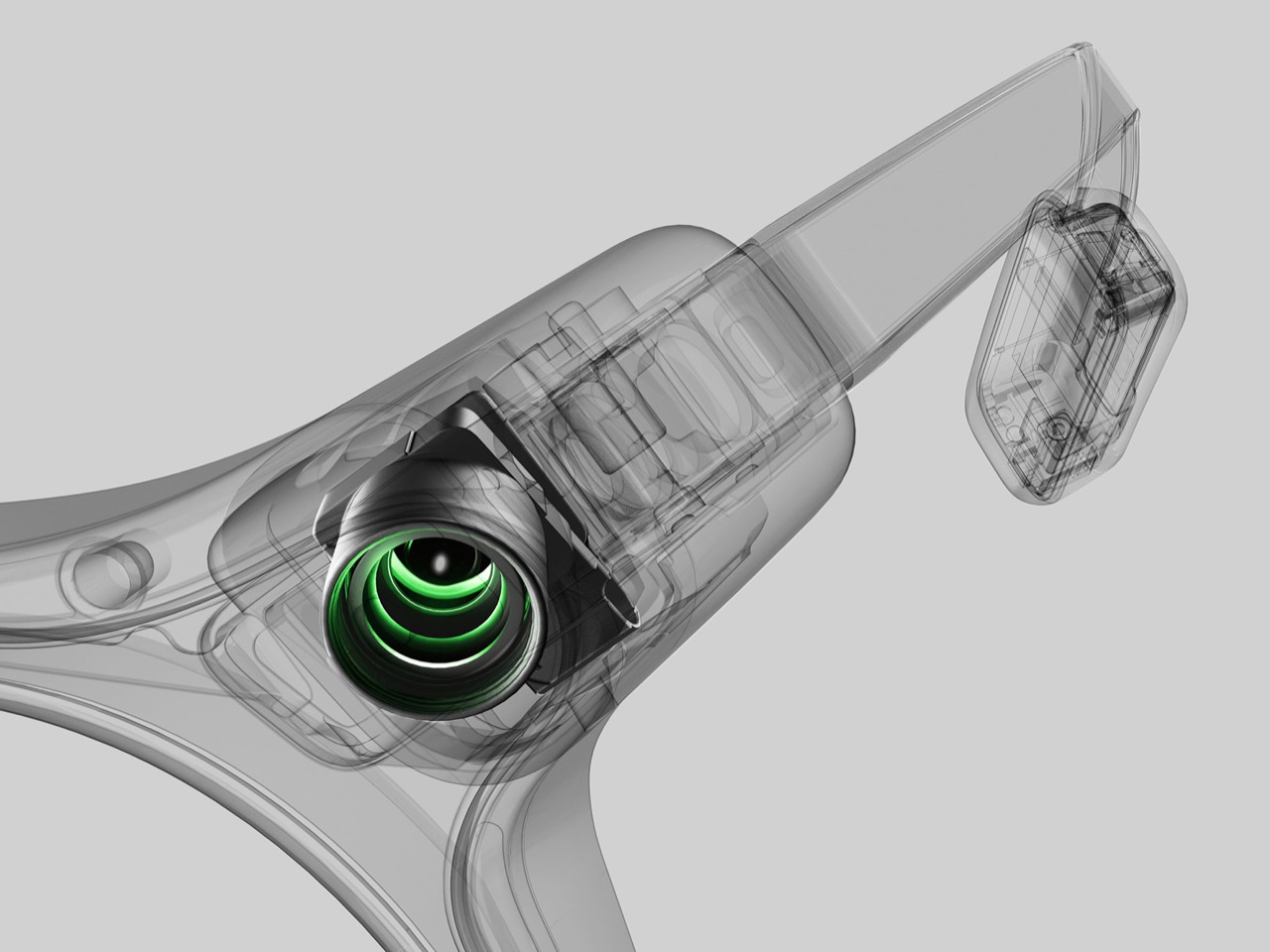

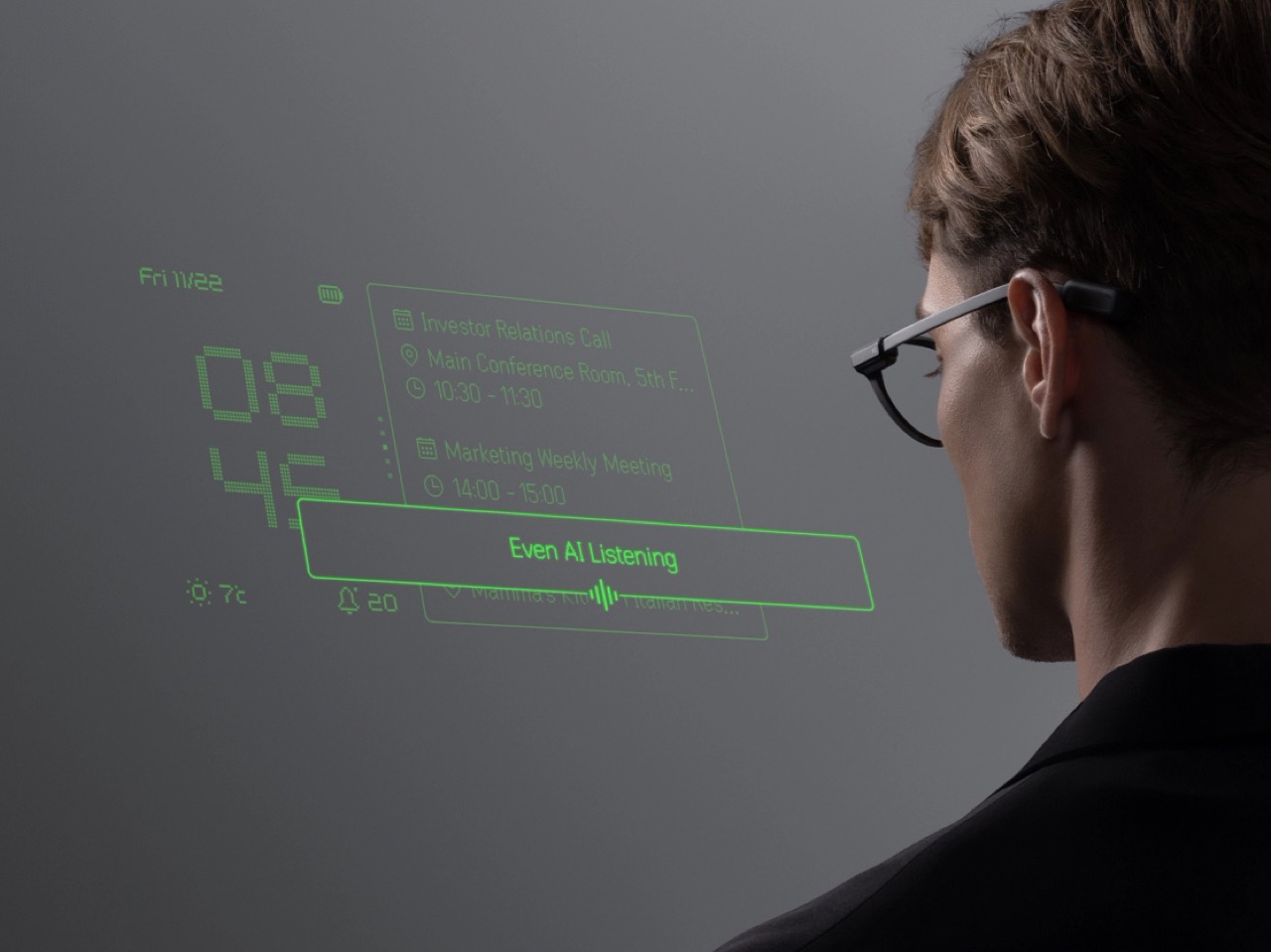

The Ray-Ban Meta glasses work because they are simple and focused. They take photos and videos, play music, make calls, and provide real-time answers through an AI assistant. Parents use them to record their kids hands-free. Travelers use them for translation. The form factor, actual Ray-Ban Wayfarers that cost around $300, means they do not scream “I am wearing a computer on my face.” This is the rare Meta hardware product that feels intuitive rather than forced, and it is selling because it solves boring, everyday problems without requiring users to change their behavior.

Then Meta made a critical mistake. To use the glasses, you have to route everything through the Meta AI app, which means you cannot simply use the hardware without wading through Meta’s AI-slop ecosystem. Want to access your photos? Meta AI. Want to tweak settings? Meta AI. The app is the mandatory gateway, and it is stuffed with the same kind of algorithmic recommendations and AI-generated suggestions that clutter Instagram and Facebook. Instead of letting the glasses be a clean, utilitarian tool, Meta is using them as another vector to push its AI products. Google and Samsung are not going to make that mistake. Their glasses will integrate with Android XR and existing ecosystems without forcing users into a single AI app. Apple, if and when it launches, will almost certainly take a similar approach: clean hardware, seamless OS integration, optional AI features. Meta had a head start, Ray-Ban branding, and a product people actually liked. It is on track to waste all of that by prioritizing AI evangelism over product discipline, and the competition is going to eat its lunch.

What Happens When You Chase Narratives Instead of Products

The pattern across metaverse and AI is that Meta keeps betting on big, abstract visions rather than iterating on the things that work. Zuckerberg is a narrative-driven founder. He wants to define the future, not respond to it. That impulse gave us Facebook in 2004, when no one else saw the potential of real-identity social networks, but it has led Meta astray repeatedly in the 2020s. The metaverse was a narrative, not a product. The idea that billions of people would strap on headsets to work and socialize in 3D was always more science fiction than product roadmap, but Zuckerberg committed so hard to it that he renamed the company.

AI feels like the same mistake. The narrative is that foundation models and “agents” will transform every part of computing, and Meta wants to be seen as a leader in that transformation. The actual products, chatbots in WhatsApp and AI-generated feed content, do not meaningfully improve the user experience and in many cases make it worse. Meanwhile, the thing that is working, smart glasses, does not fit cleanly into the AI or metaverse narrative, so the hardware itself gets less attention and investment than it deserves. Meta’s 2026 strategy, “shifting investment from metaverse to wearables,” is a tacit admission of this, but it is couched in language that still emphasizes AI rather than the hardware itself.

The other pattern is that Meta is willing to kill its own successes if they do not fit the broader narrative. Supernatural, the hit VR fitness app on Quest, was working. It had subscribers, retention, and cultural momentum within the VR fitness community. It was also a relatively small, specific product rather than a platform play, and that made it expendable when Meta decided to scale back Reality Labs. The same logic applies to Quest more broadly. The headset had carved out a niche in gaming and fitness, and with sustained investment in content and ecosystem development, it could have grown into a meaningful adjacent business. Instead, Meta is deprioritizing it because Zuckerberg has decided the future is AI and lightweight wearables. That might turn out to be correct, but the way Meta is executing the pivot, by shuttering studios and putting products in maintenance mode rather than spinning them out or finding partners, suggests a lack of product discipline.

Why Smart Glasses Might Actually Be the Next Facebook

If you step back and ask what Meta is actually good at, the answer is not virtual reality or language models. Meta is good at building social products with massive scale, capturing and distributing content, and monetizing attention through ads. The Ray-Ban Meta glasses fit all of those strengths. They make it easier to capture photos and video, which feeds into Instagram and Facebook. They use AI to provide contextual information, which ties into Meta’s model development. And they are a physical product that people wear in public, which is a form of distribution and branding that Meta has never had before.

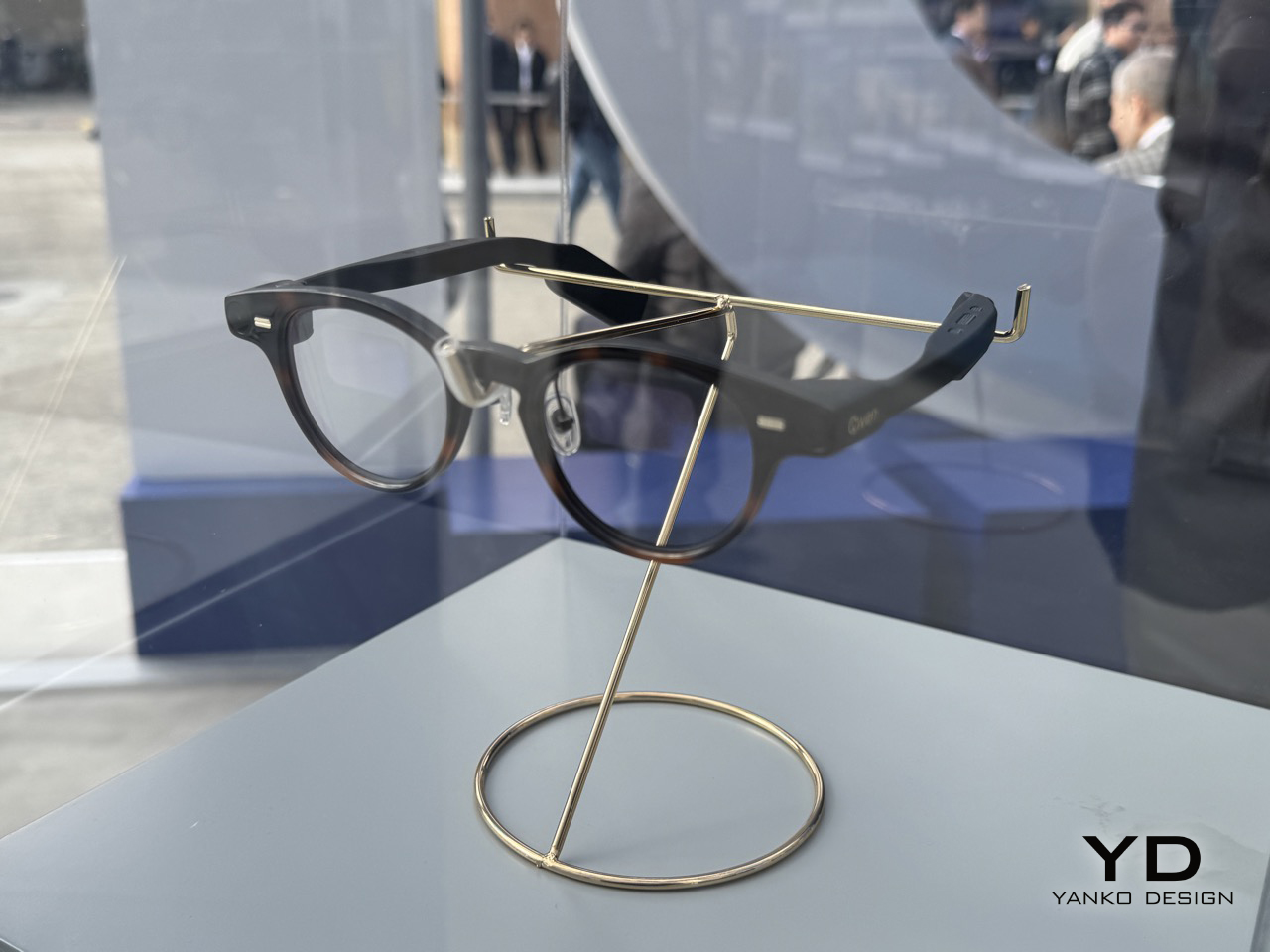

The bigger story is that smart glasses as a category are exploding, and Meta happened to be early. It is not just Samsung, Google, and Apple entering the space. Meta itself is expanding the Ray-Ban line with the Display model (which adds a heads-up display) and partnering with Oakley on HSTN, a sportier model aimed at action sports. Google is teaming up with Warby Parker for its glasses, which gives it instant credibility in eyewear design. And then there are the other challengers: Even Realities, Xiaomi, Looktech, MemoMind, and dozens more, all slated for 2026 releases. This feels exactly like the moment AirPods sparked the true wireless earbud movement. Apple defined the format, then everyone from Samsung to Sony to no-name brands flooded the market, and now you can buy HMD ANC earbuds for 28 dollars. Smart glasses are following the same trajectory, which means the form factor itself is validated, and Meta’s early lead matters less than whether it can keep iterating faster than everyone else.

The other underrated piece is that having an instant camera on your face is genuinely useful in ways that VR headsets never were. People are using Ray-Ban Meta glasses as GoPro alternatives while skateboarding, cycling, and doing action sports, because POV capture without holding a phone or mounting a camera is frictionless. Content creators are using them to shoot hands-free B-roll at events like CES. Parents are using them to record their kids playing without the weird “I am holding my phone up at the playground” vibe. Pet owners are capturing spontaneous moments with dogs and cats that would be impossible to get with a phone. These are not sci-fi use cases or metaverse fantasies. They are boring, real-world problems that the glasses solve immediately, and that is why they are selling. Meta has spent a decade chasing grand visions of the future, and it accidentally built a product that people want right now. The challenge is whether it can resist the urge to over-complicate it before Google, Samsung, and Apple catch up.

The Real Lesson Is About Focus

Meta has spent the last five years oscillating between grand visions, metaverse and AI, and neglecting the products that actually work. The Ray-Ban Meta glasses are proof that when Meta focuses on solving real problems with tangible products, it can still build things people want. The metaverse failed because it was a solution in search of a problem, and the AI push is struggling because Meta is shipping features rather than products. Smart glasses, by contrast, are succeeding because they make everyday tasks easier without requiring users to change their behavior or buy into a futuristic narrative.

If Zuckerberg can internalize that lesson, Meta might actually have a shot at owning the next platform. But that requires a level of product discipline and restraint that Meta has not shown in years. It means resisting the urge to turn every product into a platform, admitting when a bet has failed rather than pouring another $10 billion into it, and focusing on iteration over narration. The irony is that Meta already has the right product. It just needs to stop looking past it.

The post Meta Misread the Future Twice. Now They’re Sitting on a Golden Egg, But Don’t Know It first appeared on Yanko Design.