If not Palantir, why Palantir-shaped?

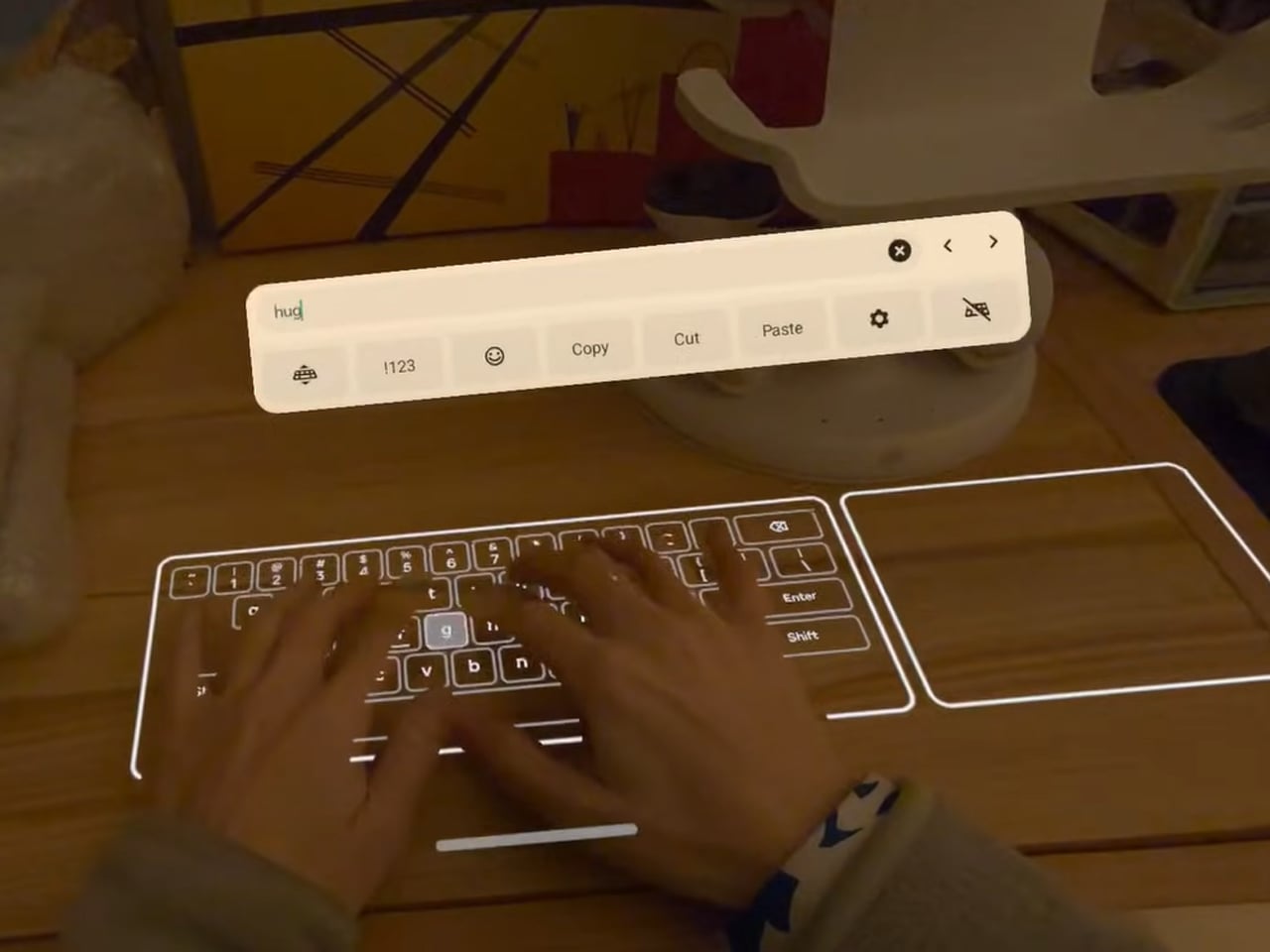

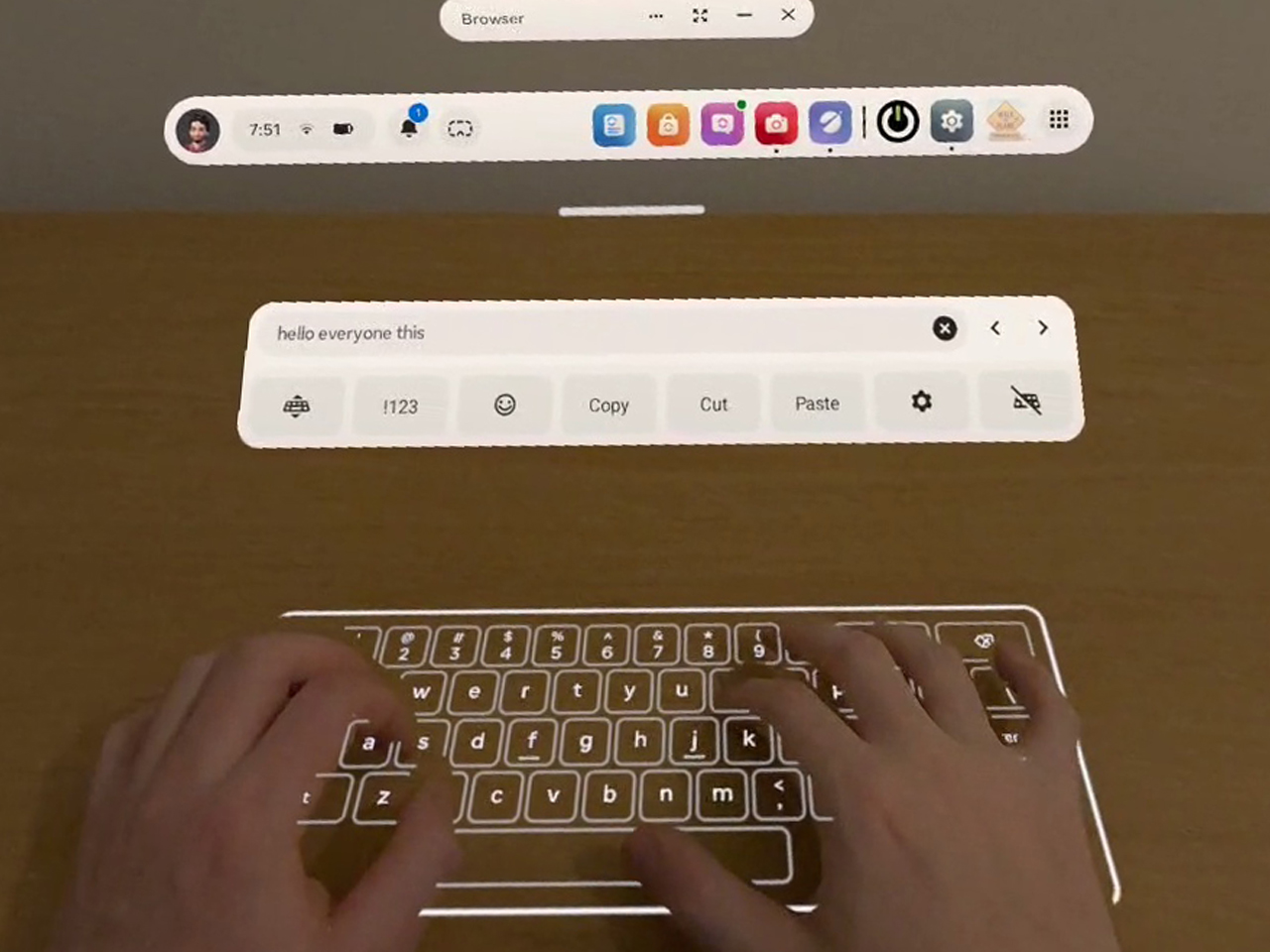

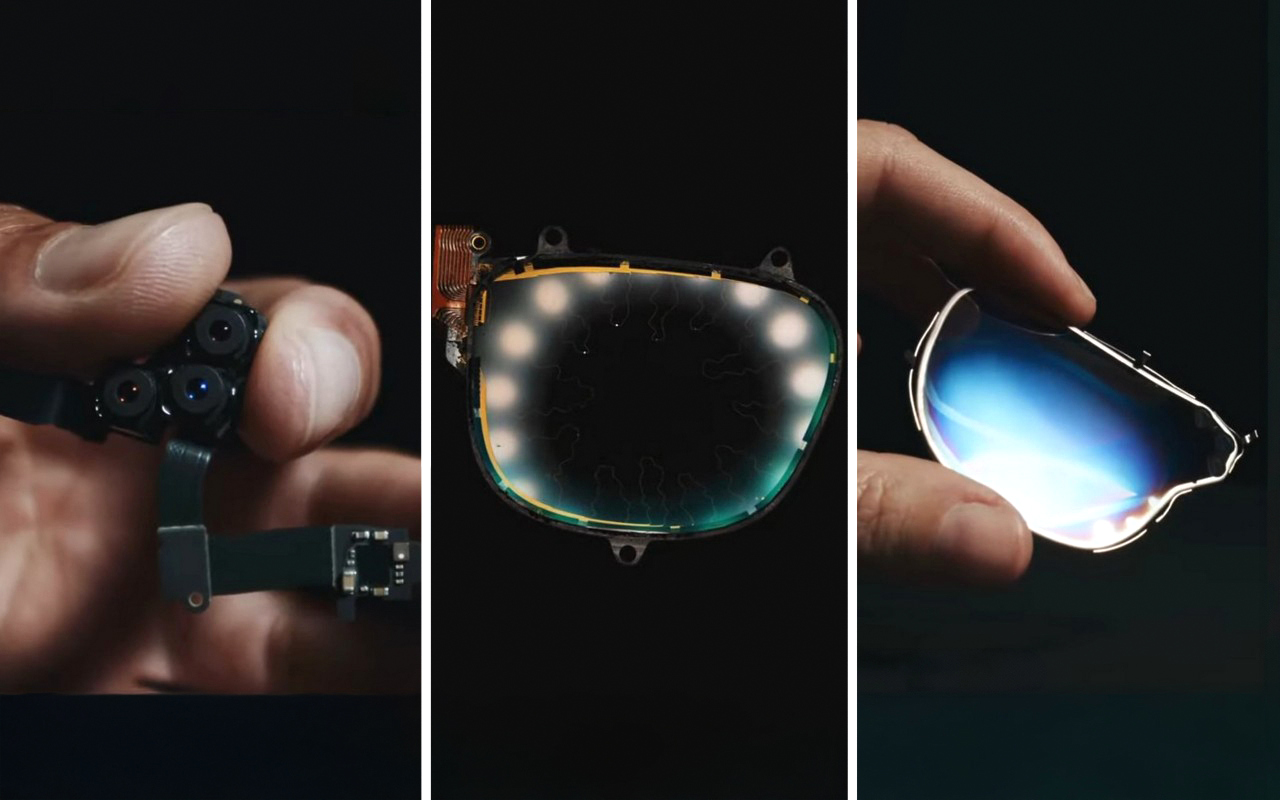

Palantir builds spy tech for the CIA, DHS, and ICE. It aggregates data, maps your life, and tells governments who to watch. Meta is building something with the same bones. It’s called Name Tag, a facial recognition feature coming to Ray-Ban smart glasses that lets a wearer look at a stranger in public and have an AI identify them in real time, pulling their name and profile directly from Facebook and Instagram. The surveillance hardware is a $300 fashion accessory, the database was built by 3 billion people tagging photos for free, and the targets are anyone, anywhere, who never agreed to any of it.

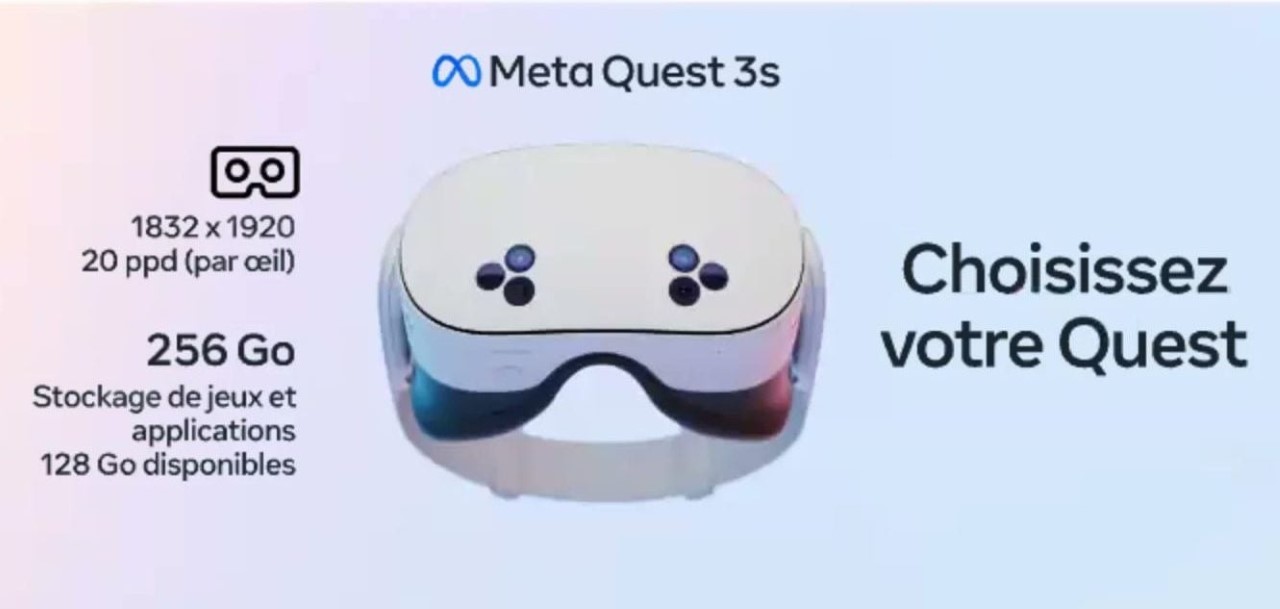

A leaked internal memo from May 2025, obtained by The New York Times, laid out the full scope: the feature is planned for every pair of Meta’s glasses, from Ray-Bans to the Oakley Meta HSTN sports line. Meta’s official response was a practiced non-denial: “we’re still thinking through options and will take a thoughtful approach if and before we roll anything out.” Companies that aren’t building something just say they’re not building it. Meta is not saying that.

The Database Was Being Built Before the Glasses Existed

Facebook turned on automatic photo tagging in 2010 with zero opt-in, and for eleven years, every time you tagged a friend’s face in a photo, you were feeding Facebook’s facial recognition model. When Meta “deleted” over a billion faceprints in 2021 under lawsuit pressure, they kept the photos. They kept the social graph. They kept the engineers who built the whole thing. Name Tag isn’t a new product concept; it’s a previously mothballed capability getting a second run, this time with a camera on your face instead of a server in Menlo Park.

Anyone with a public Instagram account is immediately a potential target (it’s not like making your account private makes you any safer), which covers hundreds of millions of people who signed up to share photos, not to be enrolled in a real-world biometric identification system. Remember Portal, Meta’s smart home display with a face-tracking camera? It launched in 2018 right in the middle of the Cambridge Analytica fallout, and consumers collectively declined to put a Facebook camera in their living room. Meta discontinued it by 2022. The lesson they apparently took wasn’t “don’t build surveillance hardware.” It was “make sure the camera comes in wearing someone else’s face.”

They Know Exactly How We’ll React

“We will launch during a dynamic political environment where many civil society groups that we would expect to attack us would have their resources focused on other concerns.” That’s a sentence taken directly from an official internal planning document from Meta’s Reality Labs, dated May 2025 and reviewed by The New York Times. The company was explicitly planning to exploit civic chaos as a launch window, timing the rollout of a mass surveillance feature to coincide with some other crisis that would keep the public, and its watchdogs, too distracted to push back. Sleight of hand, with a dash of corporate evil. There’s no ethical framework in which that sentence represents good-faith product development.

Their original rollout plan was to debut Name Tag at a conference for the blind, wrapping a mass-surveillance tool in the language of accessibility before expanding it to the general public. That plan was eventually shelved, but the thinking behind it is the more revealing part. The accessibility framing was a softening mechanism, a way to generate human-interest coverage before the obvious misuse cases took over the conversation. Privacy advocates, abuse charities, and civil liberties groups were going to come for this feature regardless. The strategy was never to address their concerns. It was to buy a news cycle of goodwill first.

Your Face Is Being Reviewed in a Nairobi Office Park Right Now

Swedish newspapers Svenska Dagbladet and Göteborgs-Posten tracked Meta’s data pipeline from Ray-Ban glasses worn in Western homes to a company called Sama, operating out of an office park in Nairobi, Kenya. Workers there are paid to watch footage captured by glasses users and label what they see, teaching Meta’s AI to understand and interpret the visual world. The footage includes people on the toilet, naked bodies, couples in bed, bank card details accidentally filmed, and intimate conversations between people who had no idea they were being recorded, let alone reviewed by a contractor on another continent.

Meta’s defense was to point at a clause buried in their terms of service permitting “manual (human)” review of AI interactions, which is technically accurate and practically worthless as a justification, because no person buying a pair of fashion-forward smart glasses understands that clause to mean workers in Kenya are watching them undress. The April 2025 privacy policy update for the glasses silently expanded Meta’s right to use all captured photos, videos, and audio for AI training, with no prominent notification to existing owners. A class action lawsuit filed in San Francisco federal court in March 2026 argues this constitutes consumer fraud, given that Meta’s own marketing described the glasses as “designed for privacy, controlled by you.” The UK’s Information Commissioner’s Office wrote to Meta characterizing the situation as “concerning,” which in British regulatory language lands somewhere between “deeply troubled” and “genuinely alarmed.”

$2.1 Billion in Fines and Still Going

The fine history reads like a repeat offender’s rap sheet. Meta paid $650 million to settle an Illinois class action, brought under the state’s Biometric Information Privacy Act (BIPA), over collecting facial geometry without consent through Facebook’s “Tag Suggestion” feature. They paid another $68.5 million to settle a further BIPA claim in 2023. In 2024, Texas extracted $1.4 billion from them for capturing biometric data on millions of Texans “for commercial purposes” without informed consent, with the lawsuit specifically alleging Meta was disclosing that data for profit. That’s over $2.1 billion in biometric privacy penalties across four years, all for variations of the same violation, against the same company, building the same technology.

None of it changed the product roadmap. The Texas settlement of $1.4 billion represents roughly one percent of Meta’s $134 billion in 2023 revenue. The Electronic Privacy Information Center has filed complaints with the FTC calling Name Tag a direct facilitator of “stalking, harassment, doxxing and worse.” The EU’s AI Act classifies real-time remote biometric identification in public spaces as high-risk AI and prohibits it for most commercial applications. The fines and the regulatory pressure are clearly baked into Meta’s planning rather than functioning as deterrents. They paid $2.1 billion to establish what a decade of biometric data collection actually costs, looked at that number next to their revenue, and decided it wasn’t a fine. It was an investment.
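The arithmetic behind those figures is easy to check for yourself. Here is a minimal sketch in Python that uses only the numbers quoted above. The labels and variable names are ours, purely for illustration, and the revenue figure is the rounded $134 billion cited in this article rather than an exact number from a filing.

```python
# Penalty amounts as quoted in this article, in US dollars.
# The dictionary keys are illustrative labels, not official case names.
fines = {
    "Illinois class action (Tag Suggestion)": 650_000_000,
    "Second BIPA settlement (2023)": 68_500_000,
    "Texas settlement (2024)": 1_400_000_000,
}

# Rounded 2023 revenue figure as cited in the article (an approximation).
revenue_2023 = 134_000_000_000

total_fines = sum(fines.values())
texas_share = fines["Texas settlement (2024)"] / revenue_2023
total_share = total_fines / revenue_2023

print(f"Total penalties: ${total_fines / 1e9:.2f} billion")
print(f"Texas settlement vs. 2023 revenue: {texas_share:.2%}")
print(f"All three penalties vs. 2023 revenue: {total_share:.2%}")
```

On those inputs the total comes to about $2.12 billion. The Texas settlement alone works out to roughly 1.04 percent of a single year’s revenue, and all three penalties combined to roughly 1.58 percent.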

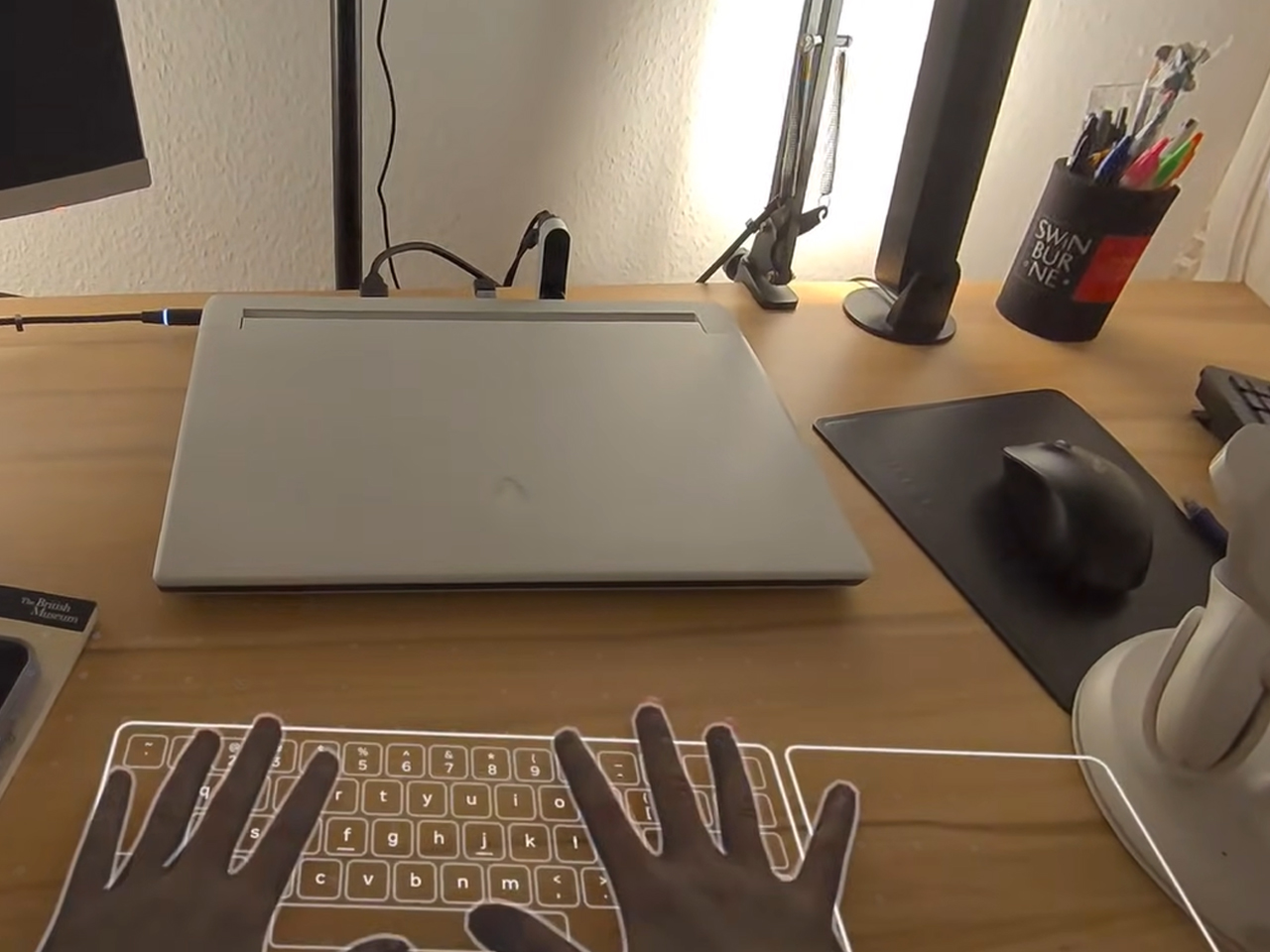

The Glasses Are Just the Beginning

Name Tag as currently designed still requires the wearer to deliberately trigger an identification query. The next product removes even that minimal friction. Internal documents describe “super sensing” glasses with always-on cameras and microphones that record continuously for the entire duration they’re worn, feeding an unbroken stream to an AI assistant that builds a fully searchable log of the wearer’s day. The surveillance model shifts from opt-in query to permanent ambient default. Every person who passes within the glasses’ field of view gets their face processed, regardless of whether they’ve opted out, regardless of whether they even know the technology exists.

The threat model was demonstrated in 2024 by two Harvard students, AnhPhu Nguyen and Caine Ardayfio, using nothing but current, available hardware. They connected Ray-Ban Meta Gen 2 glasses to PimEyes, a commercial facial recognition engine, alongside LLM data extraction tools, FastPeopleSearch, and Cloaked.com for social security lookups. Streaming the feed to Instagram Live, they identified strangers on the Boston subway and pulled names, home addresses, phone numbers, and social security numbers in seconds. They approached a woman on the street, told her they’d met at a Cambridge Community Foundation event, and she believed them. They told a female student her Atlanta home address and her parents’ names; she confirmed they were right. Name Tag doesn’t make this possible. It already is possible. Name Tag just makes it Meta’s official product.

What “Opt-Out” Actually Means

Meta’s proposed safeguards rely on limiting identification to connected contacts or public accounts, and offering an opt-out toggle buried in Instagram settings. The connected-contacts restriction doesn’t address the most statistically common danger. Stalkers, abusers, and harassers overwhelmingly target people they already know. Limiting the feature to existing connections doesn’t reduce the risk to the most vulnerable users; it concentrates that risk on them. Domestic abuse charities in the UK raised this point directly, noting that abusers could use Name Tag to locate survivors who have relocated, changed their appearance, or created entirely new digital identities to stay safe.

The opt-out toggle is available to Instagram’s roughly 2 billion monthly active users, almost none of whom will encounter it organically. Privacy protections that require the potential victim to proactively locate and activate a setting are not privacy protections. They are liability documentation. Abuse survivors, journalists, political dissidents, undocumented individuals, people in witness protection: these are the people with the highest stakes, and also the people with the least bandwidth to hunt through app settings on the off chance that facial recognition has been added to a device they don’t even own. The toggle protects Meta in a courtroom. It protects its users in no meaningful sense at all.

We Were Free Labor All Along

Twenty years of tagging photos, liking posts, following accounts, and uploading selfies. Every interaction trained the model. Every tagged face sharpened the database. Meta framed all of it as self-expression and social connection, and it was, but it was also free labor on the world’s largest biometric mapping project. The glasses are the hardware layer that connects that digital registry to the physical world. The data collection phase is largely complete. The deployment phase is now.

Reddit ran the same playbook with text and nobody stopped them either. In early 2024, Reddit signed a $60 million-per-year deal with Google to license user-generated content for AI training, then struck a separate deal with OpenAI estimated at $70 million annually. Two decades of forum posts, niche expertise, personal advice, and community-built knowledge that users created for each other got packaged and sold to the highest bidder. Users built the database. Reddit sold it. The users got nothing except the knowledge that their words now live inside a model they don’t control. Meta’s version is identical in structure and more intimate in substance, because the asset being extracted isn’t something you typed. It’s your face, your home, and the faces of everyone in your immediate vicinity.

While all of this unfolds on the hardware and data side, Meta is simultaneously stripping privacy from the software side. End-to-end encryption for Instagram DMs dies on May 8, 2026. Meta’s stated justification is that “very few people” were using it, which is a direct consequence of never making it the default and never promoting it. After May 8, Meta retains full technical access to message content, which means any contractor, government request, or legal process with sufficient leverage can access it too. The feature was specifically extended to users in Ukraine and Russia during the war as a safety measure for people in genuine danger. Those users are now being told to download their chats before the cutoff. The facial recognition is the front door. The unencrypted message access is the unlocked safe. At some point the question stops being “is Meta building a surveillance company?” and starts being “why are we still acting like it isn’t one?”

The post Meta Is Turning Its Smart Glasses Into A Mass Surveillance Tool… And You Can’t Stop It first appeared on Yanko Design.