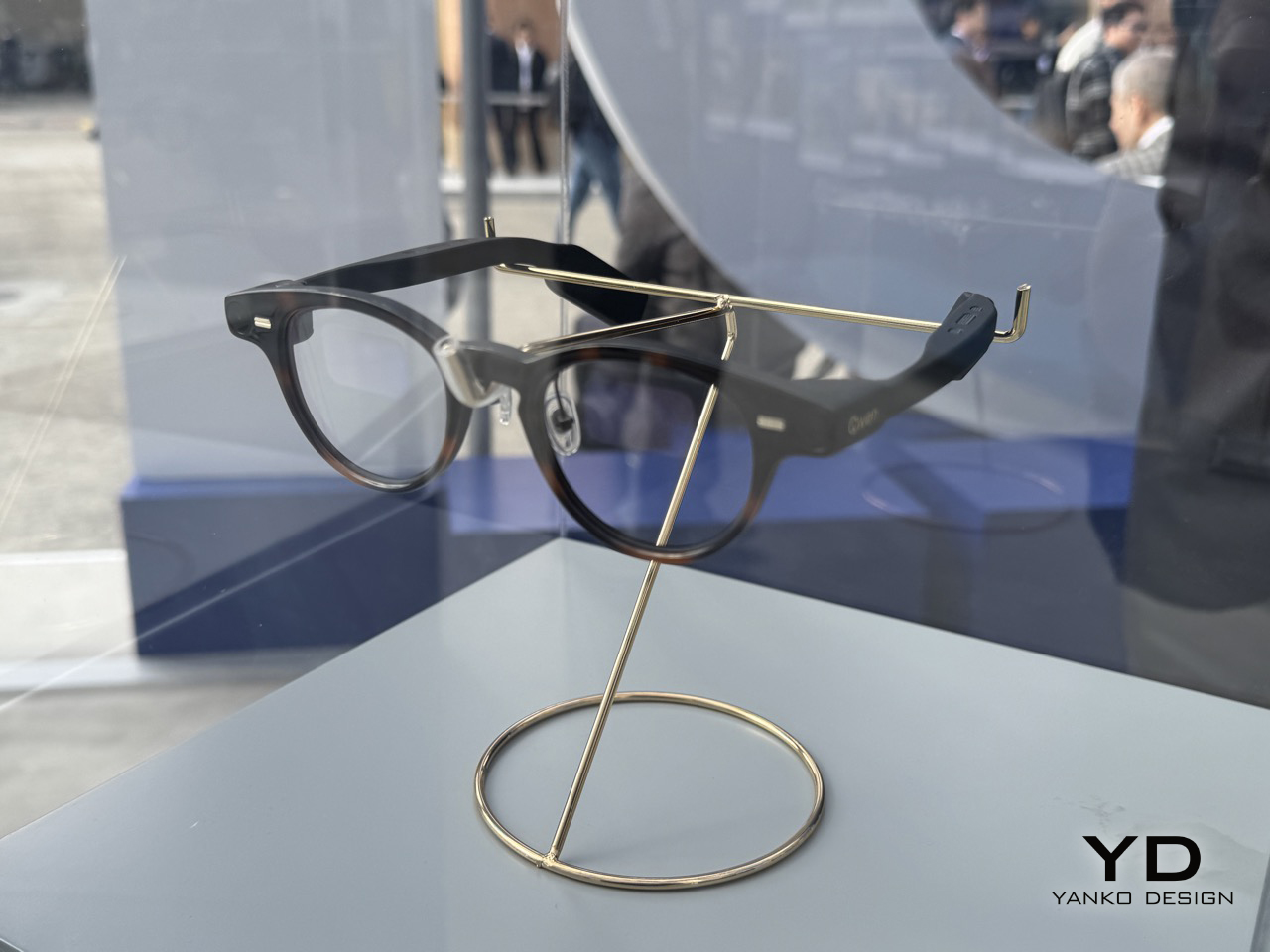

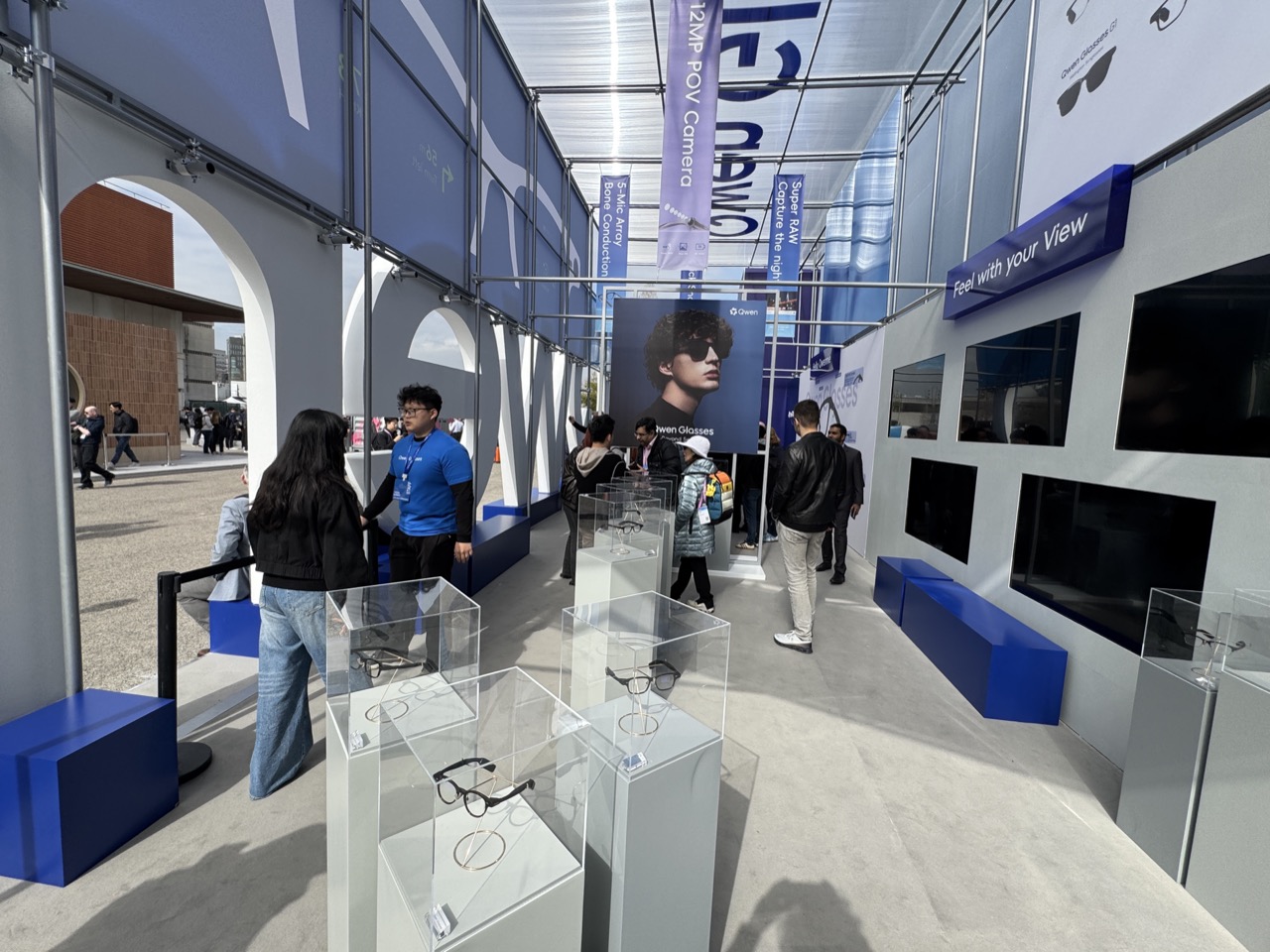

Every year, MWC arrives with the promise of seeing the future of mobile technology, or at least a very expensive approximation of it. The 2026 edition marked the event’s 20th anniversary in Barcelona, and while nearly 105,000 people showed up, there was a noticeable shift in what filled the booths: fewer headline-grabbing product launches, more working concepts and prototypes across every category imaginable.

That’s not necessarily a bad thing. When manufacturers stop competing on a single spec and start showing what they’re thinking about next, the underlying patterns get easier to read. Five trends cut across product categories at MWC 2026, spanning smartphones, laptops, and robotic companions. None of them belongs to one company, and none of them is going away anytime soon.

Robots got a size reduction

For the past couple of years, humanoid robots have been stealing the show at tech events. They walk, they wave, they occasionally fall over, and everyone takes a video. The problem is that a bipedal robot that can fetch a package from across the room is not something most people actually need sitting in their office. MWC 2026 suggested the industry might be starting to figure that out.

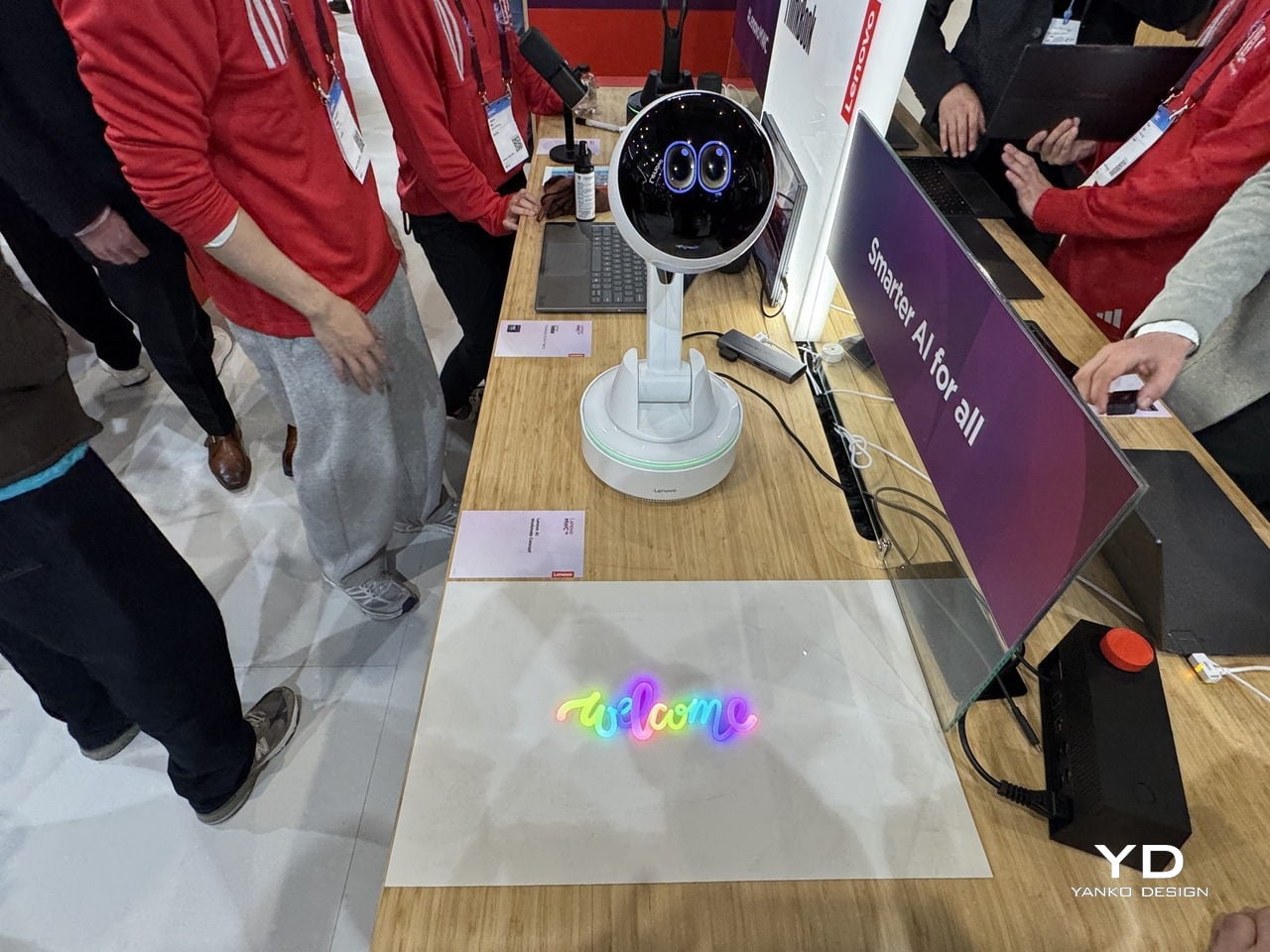

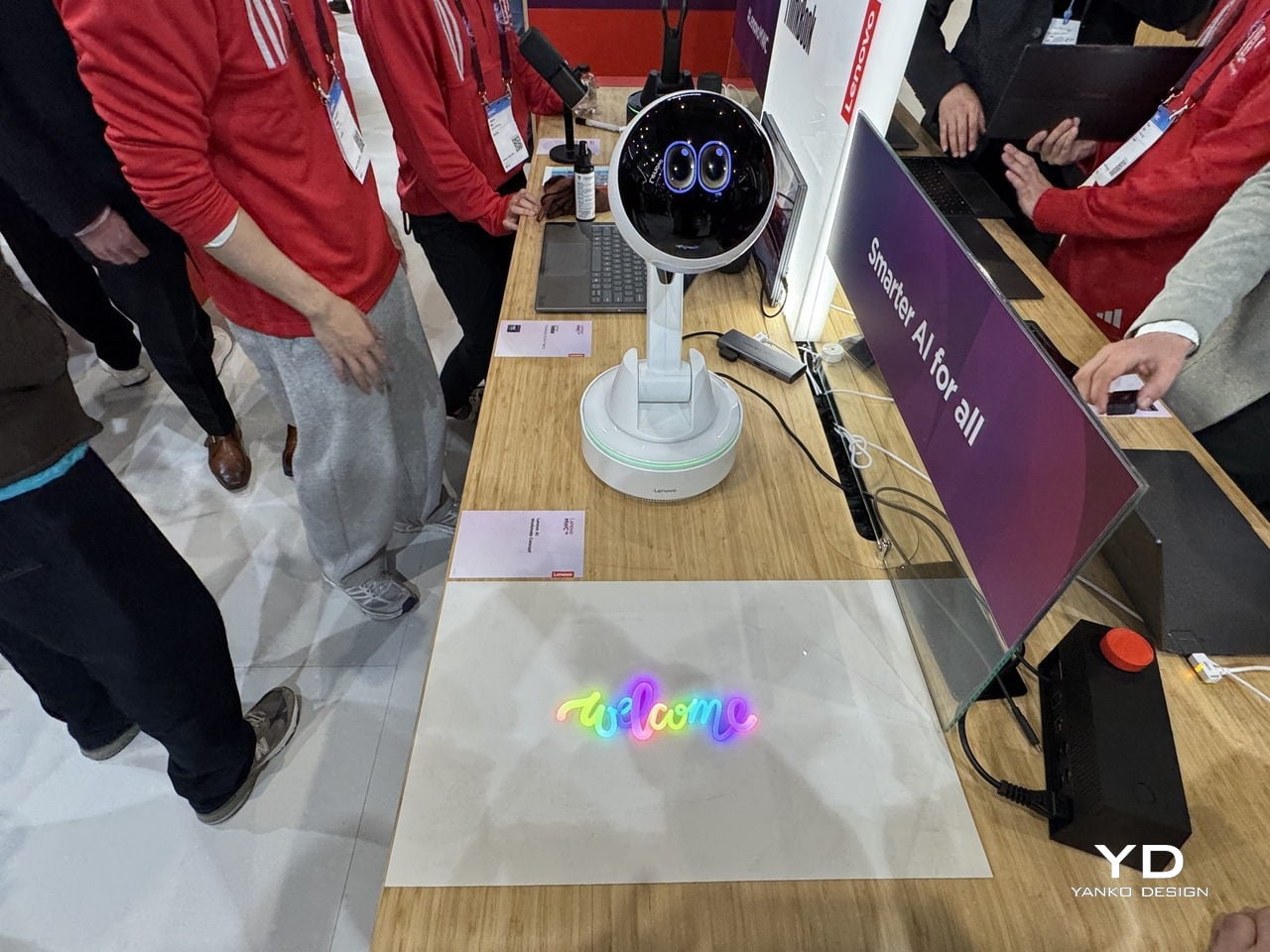

The robots worth talking about this year were small, desk-bound, and refreshingly honest about what they could do. Lenovo’s AI Workmate Concept is a desk-mounted unit that handles document scanning, note organization, and presentation help through voice, gesture, and spatial interaction, processing everything on-device. It can even project content onto your desk or a nearby wall, which sounds gimmicky until you think about how useful a hands-free reference surface actually is during a meeting.

Samsung Display’s OLED AI Mini PetBot takes the idea in a more playful direction. It is a pocket-sized robot with a 1.34-inch circular OLED screen for a face, reacting to voice and touch with animated expressions. It comes from Samsung’s display division rather than its product team, so this is less a product announcement and more a demonstration of where the panel technology can go.

AI is learning to show its feelings

Most people’s experience of AI right now involves typing into a box and getting text back, or asking a question into empty air and hearing a voice that sounds like it was recorded in a server room. It works, but it does not feel particularly warm. A cluster of products at MWC 2026 was specifically trying to fix that, not by making AI smarter, but by making it more expressive.

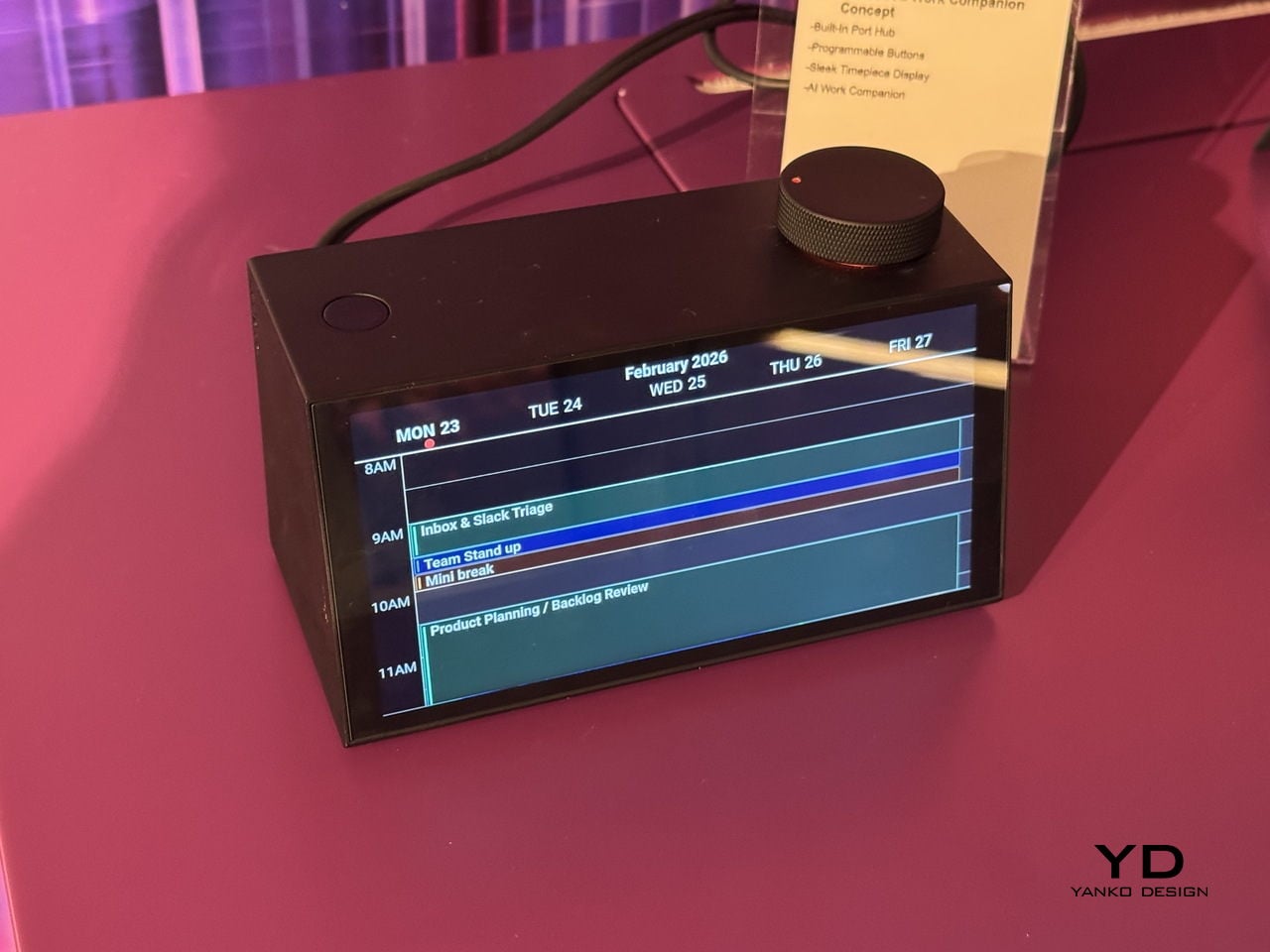

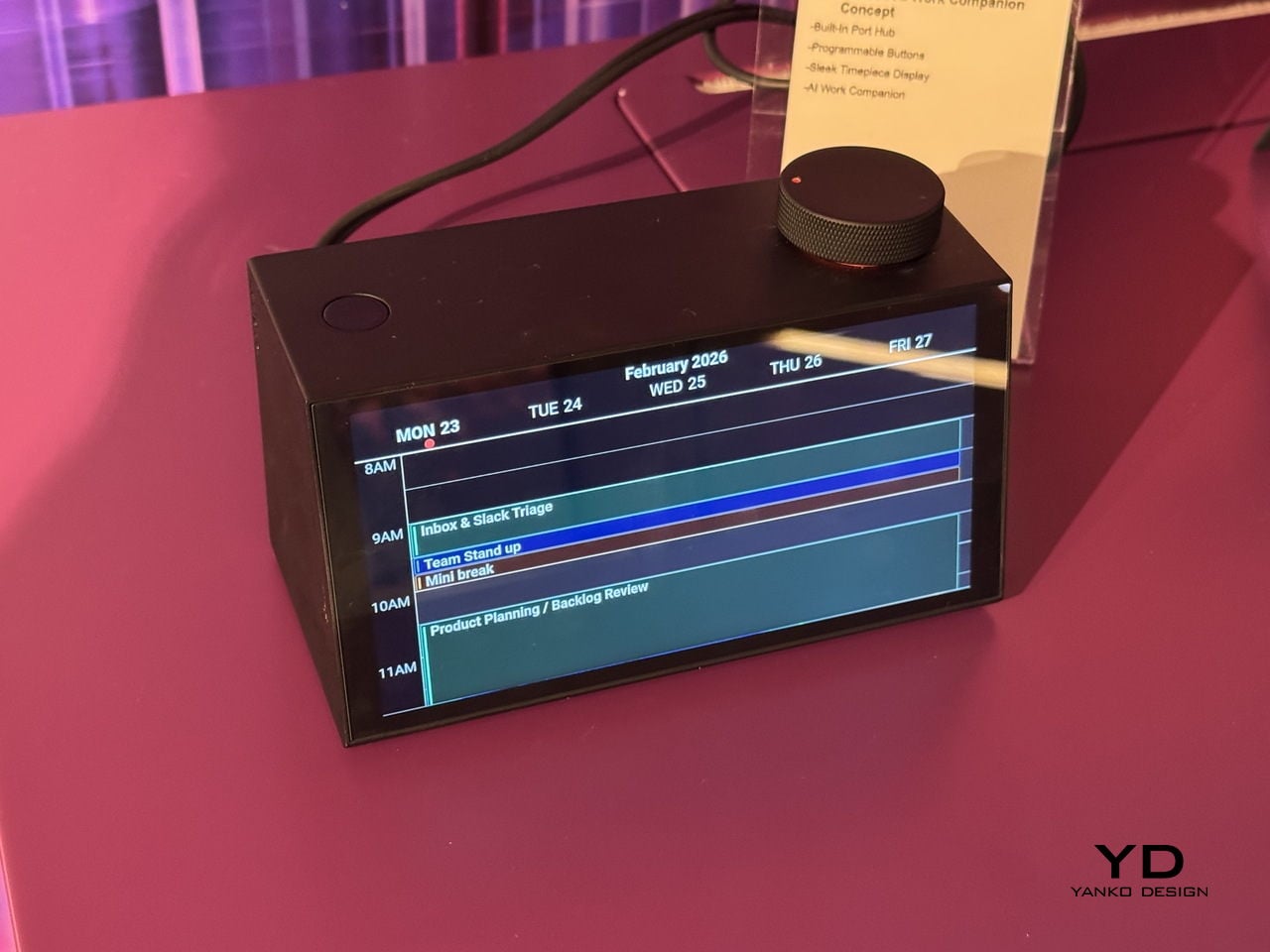

Lenovo’s AI Work Companion Concept looks like a desk clock, which is either a clever disguise or a statement about how unobtrusive AI should be. Its AI planning system, called Thought Bubble, syncs tasks and schedules from across your devices to build a daily plan, monitors screen time, nudges you to take breaks, and delivers an end-of-week summary of what you actually got done. The behavioral framing is deliberately light. The goal is to build a rhythm rather than manage a list, and the device is designed to feel like a presence in your workspace rather than another notification surface.

TCL’s Tbot takes a similar approach for a younger audience. It pairs with the company’s MOVETIME kids smartwatch, so when a child gets home and drops the watch onto Tbot’s magnetic dock, the robot comes to life as a study companion and bedtime storyteller. The physical handoff is a considered design decision, a tangible trigger rather than an app to open.

Honor’s Robot Phone extends the idea into the phone itself. A motorized titanium alloy gimbal arm holds a 200-megapixel camera that nods when it agrees, shakes when it doesn’t, and tracks you across the room. Honor plans to sell it in the second half of 2026, which means it will be the first of this particular batch of emotionally expressive AI devices to actually land in someone’s hands.

Modular design, this time as a practical argument

Modular phones have been promised before, from Project Ara to the LG G5 to Fairphone at various stages of its evolution. The pitch is always appealing: buy a base device, then upgrade the camera, swap the battery, and add what you need. The reality has usually involved awkward connectors, software that doesn’t quite work, and products that disappear within two years. MWC 2026 had a notable cluster of modular devices, and what made them interesting is that each was solving a different version of the problem.

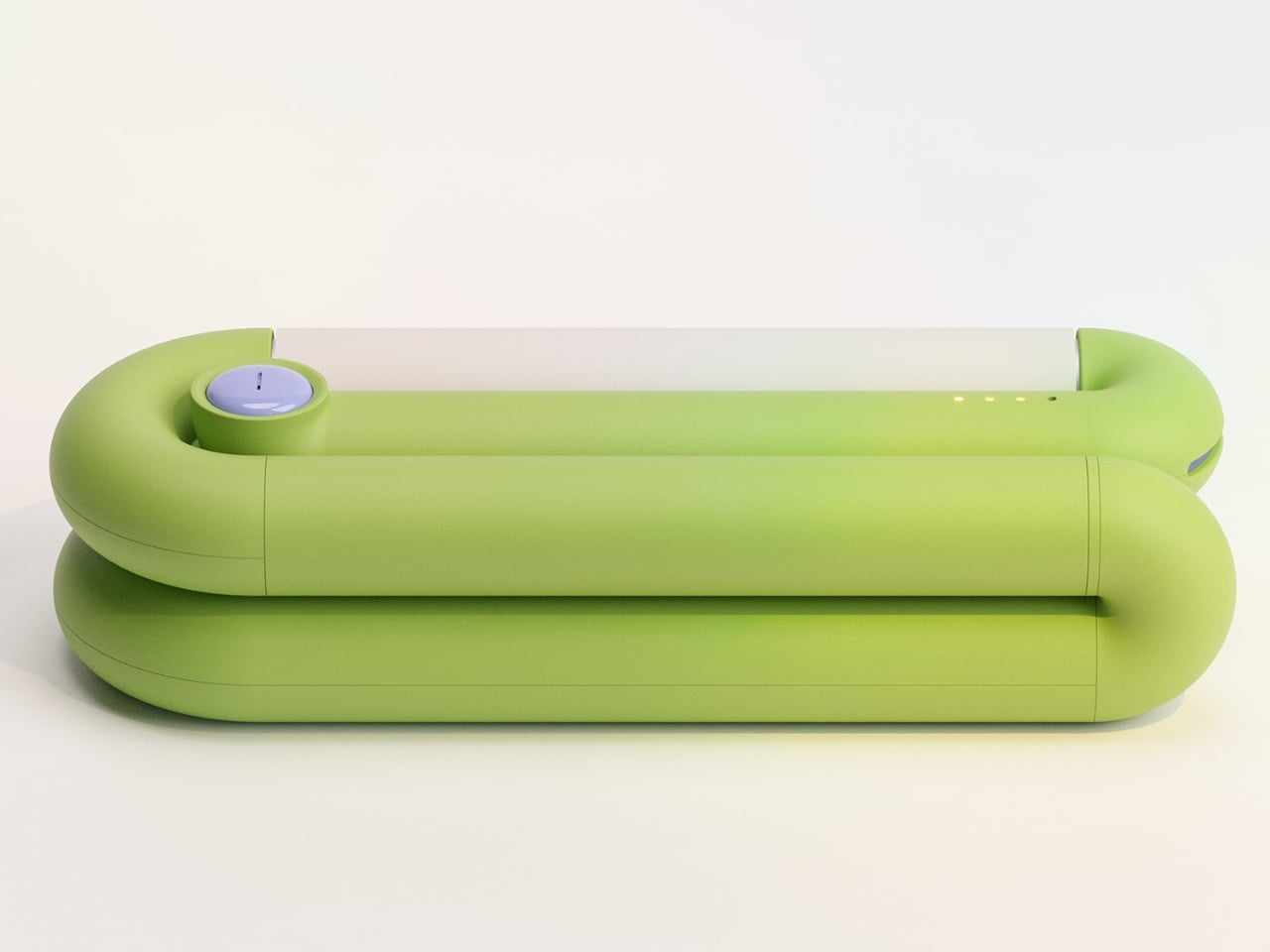

Lenovo’s ThinkBook Modular AI PC Concept approaches it from the laptop side. The 14-inch base connects to a secondary screen via pogo pins, and that screen can sit alongside the base as a travel monitor, mount on the lid for face-to-face sharing, or replace the keyboard to create a dual-display setup. Interchangeable I/O ports, covering USB Type-A, USB Type-C, and HDMI, mean the connection layout changes with the workflow. It’s a concept aimed at professionals who spend their day switching between contexts, and the argument is about longevity and flexibility rather than upgradeability for its own sake.

TECNO’s Modular Magnetic Interconnection Technology works from the phone outward. The base device is 4.9mm thick, which is thinner than anything Apple or Samsung currently sells, and that extreme thinness turns out to be the point. Modules, including telephoto lenses, battery packs, microphones, wallets, and speakers, attach magnetically to the rear without making the phone ungainly.

Ulefone’s RugOne Xsnap 7 Pro is less elegant but arguably more practical: a rugged phone whose rear camera detaches and operates independently as a wearable action camera. Three very different products, three different price tiers, and the same underlying idea. A device you can reconfigure is a device you keep longer.

The keyboard is making a serious case for itself

BlackBerry’s demise was supposed to be the end of physical keyboards on phones. Touch screens were better, the argument went, because they could be anything. And that argument was mostly right. But touch screens were also cold, imprecise for fast typing, and ate half the display whenever you needed to type more than a sentence. A small but persistent group of users never fully made peace with that trade-off, and in 2026, they suddenly had options.

The Unihertz Titan 2 Elite was at MWC with a 4.3-inch AMOLED display at 120Hz above a physical QWERTY keyboard with touch-sensitive keys that also function as a trackpad. The aluminum body and slimmed-down proportions mark a clear departure from the chunky, ruggedized aesthetic of earlier Titan phones. This one is trying to look like something you would actually carry every day.

The Clicks Communicator comes from a different direction. Clicks already makes keyboard cases for iPhones, and the Communicator is the logical next step: a standalone Android phone, built around a companion-device philosophy, for people who want physical keys without abandoning modern smartphone basics.

The iFROG RS1 is the strangest and most interesting of the three. It is a square phone with a 3.4-inch display that sits on top of a rotating lower section. Twist it one way, and you get a full QWERTY keyboard with tactile keycaps. Twist it the other way, and you get a gamepad with a D-pad and face buttons, which unavoidably recalls the Game Boy and the Motorola Flipout in equal measure.

What all three of these share is a belief that tactile input has genuine ergonomic value that glass surfaces haven’t replaced, just obscured. Whether that belief translates into mainstream sales is a different question.

Design became the headline spec

Phones have always been designed objects. But for most of the last decade, the design conversation at launch events came after the camera specs, after the processor benchmark, after the battery capacity. At MWC 2026, a handful of manufacturers flipped that order. The design was the lead, and everything else followed.

Honor’s Magic V6 is the most straightforward example. At 8.75mm closed, it is one of the thinnest foldables on the market, and Honor announced that measurement with the emphasis usually reserved for a performance figure. The engineering behind it is genuinely impressive: IP68 and IP69 dust and water resistance on a foldable, combined with a 6,660mAh silicon-carbon battery, means thinness was not achieved by sacrificing durability or endurance. It’s a difficult combination, and the design is doing real work to make it possible rather than just looking good on a spec sheet.

The CMF collaborations told a different story about design as positioning. Infinix’s NOTE 60 Ultra, developed with Pininfarina, applied the Italian studio’s automotive logic to the phone’s rear panel. The result is a single continuous sheet of Gorilla Glass Victus covering the triple camera array, a thin floating taillight strip, and a hidden active matrix notification display, all completely flush. No bump. Even the colorways are deliberate: Torino Black, Monza Red, Amalfi Blue, and Roma Silver all take their names from Italian places.

TECNO’s partnership with Tonino Lamborghini produced the TAURUS gaming PC, a water-cooled mini system with a 10,000mm² copper cold plate, and the POVA Metal phone, whose 241-pixel rear LED dot matrix turns the notification surface into a deliberate design feature. At the concept end, TECNO’s POVA Neon filled its rear panel with ionized inert gas to produce plasma patterns that chase your fingertip across the glass, which is either the most impractical phone feature ever conceived or a fascinating question about what a phone’s surface is actually for.

The Lenovo Yoga Book Pro 3D lets 3D creators sculpt directly on a dual-screen laptop without additional hardware. The Motorola Maxwell AI pendant turned conference transcription into something you wear around your neck. Neither is a shipping product. At MWC 2026, that seemed less like a limitation and more like the whole point: showing what you think design can do, before you have to prove it.

The post 5 Wildest Design Trends at MWC 2026: Nodding Phones and Tiny Robots first appeared on Yanko Design.