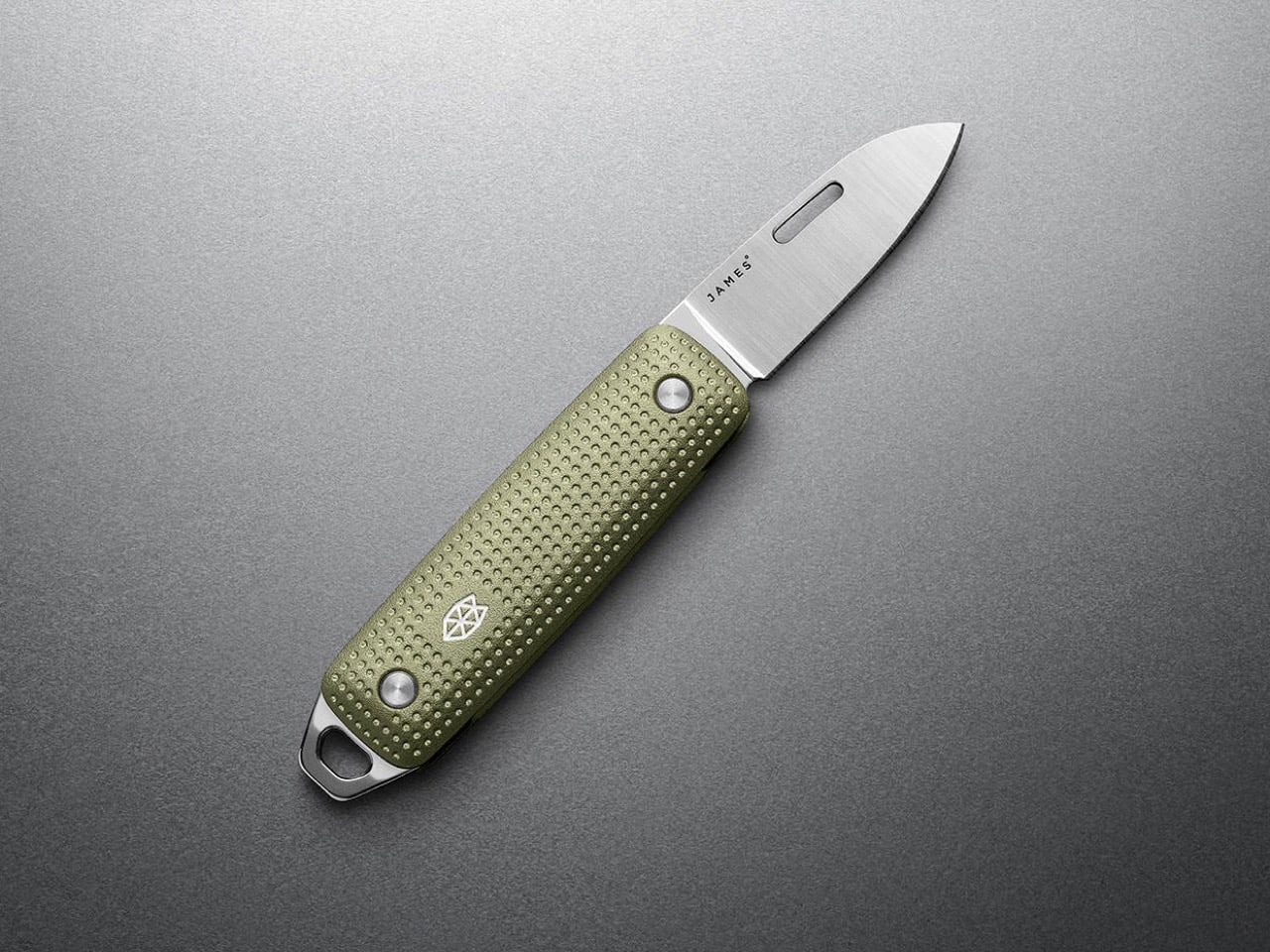

Refinement in knife design can mean two different things. Sometimes it means polishing the details on an already-successful platform, smoothing out the rough edges and tweaking the ergonomics until the product feels 5% better across the board. Other times it means stripping the design down to its founding idea and rebuilding it with better materials, tighter tolerances, and a clearer sense of what the knife is actually supposed to do in someone’s pocket. The James Brand took the second path with the Elko Gen 2, keeping the original’s core identity as a compact, non-threatening, legally unambiguous keychain blade while re-engineering nearly everything else. Machined aluminum handles replace the acetate and titanium options from the first generation, bringing a raised dot-matrix texture that wraps the entire surface. The slip-joint mechanism, nail-nick deployment, and sub-3-inch closed length remain untouched because those were the decisions that made the original Elko work in the first place.

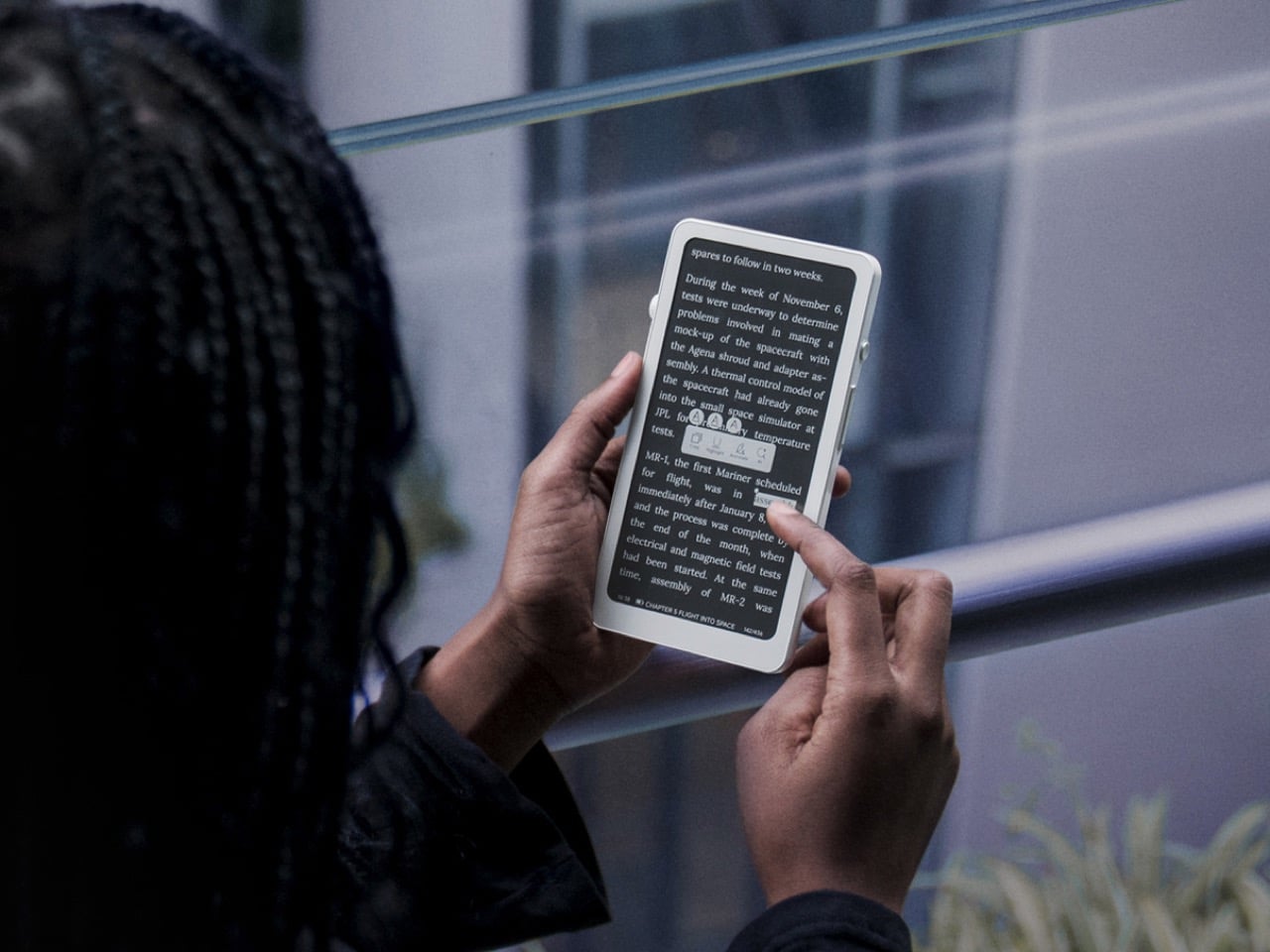

Sandvik 12C27 stainless steel, a Swedish alloy that holds an edge well above its price class, drives the cutting performance and resists corrosion in ways that matter when a knife lives on a keychain exposed to sweat, rain, and pocket lint. The blade measures 1.6 inches with a drop-point profile, short enough to avoid intimidating coworkers but long enough to handle the micro-tasks that define daily carry: packages, tags, threads, tape. Four anodized aluminum colorways span the Gen 2 lineup, from the monochrome Black + Black to the warmer Black + Fire variant with its brass-toned scraper accent. That scraper, called the All Things tool, functions as a pry bar, bottle opener, and flathead screwdriver while doubling as the attachment point for the included titanium key ring. The James Brand is pricing the Gen 2 at $65, a number that sits comfortably in the zone where people actually carry and use their knives instead of storing them in a drawer.

Designer: The James Brand

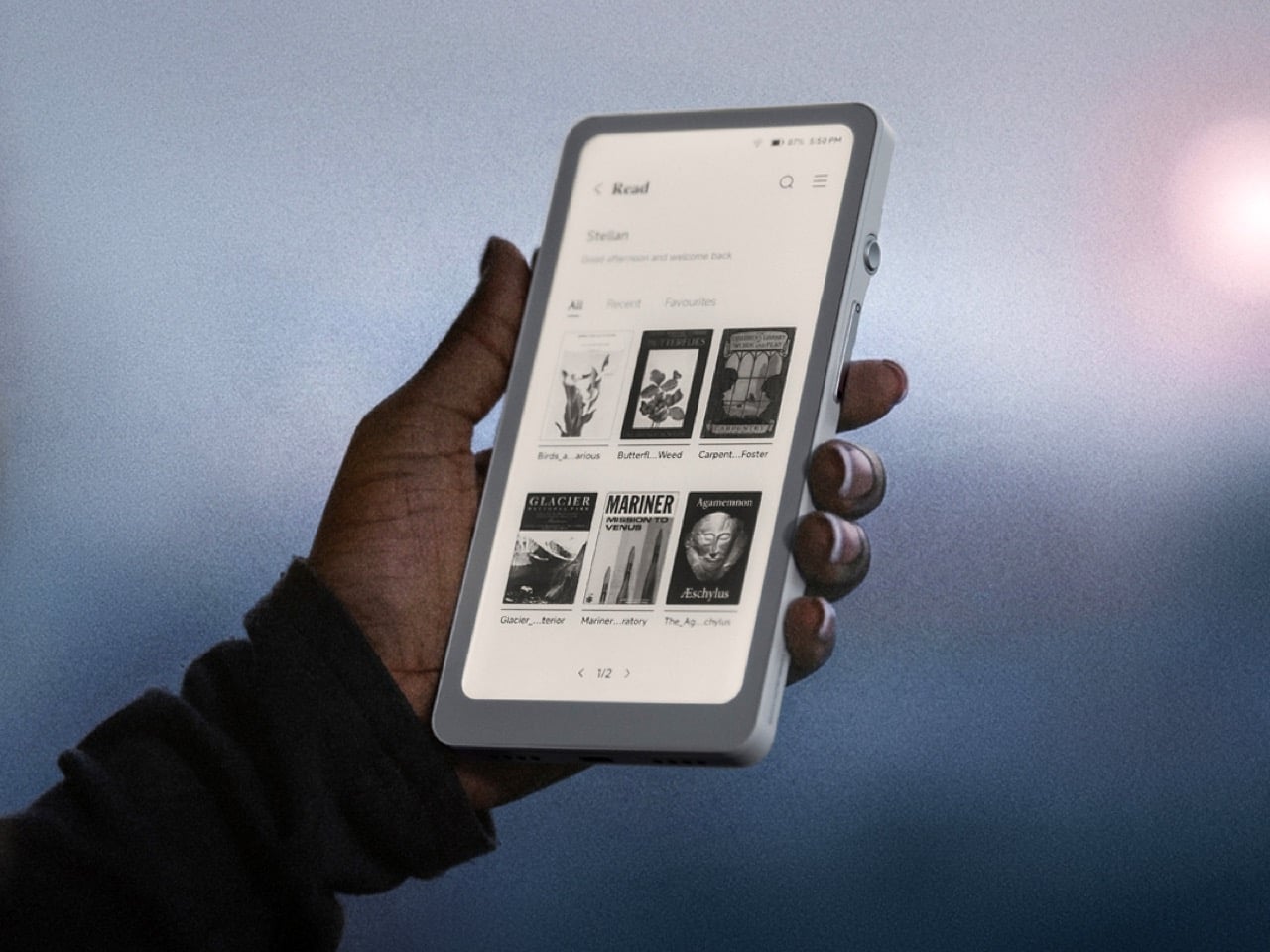

The weight tells you everything about what changed between generations. The original Elko clocked in at 1.3 ounces, light enough to disappear completely on a keychain and occasionally feel insubstantial in hand during actual use. The Gen 2 hits 3.5 ounces, a nearly threefold increase driven entirely by the shift to CNC-machined aluminum handles. That extra heft registers immediately when you pick it up, transforming the knife from something you forget you’re carrying into something that feels deliberately present without crossing into burdensome. The raised dot-matrix texture across the handle faces amplifies that sense of solidity. Each dot is uniform and precisely machined, creating a grip surface that works without resorting to aggressive jimping or rubberized inserts. It’s the kind of detail that separates a thoughtfully executed product from one that just checks spec boxes.

The slip-joint mechanism operates with the kind of snap you’d expect from a knife twice this size. There’s no lock here, which keeps the Elko legal in jurisdictions where locking blades trigger stricter carry laws, but the spring tension holds the blade open firmly enough that it won’t fold during normal cutting tasks. The nail nick is slotted longer than most compact knives bother with, making it easy to catch with a thumbnail even if you’re working quickly or wearing gloves. Opening the blade feels deliberate in a way that thumb studs and flippers sometimes don’t, a tactile ritual that reminds you you’re deploying an edge rather than flicking a fidget toy. Closed, the knife measures 2.6 inches, which makes it shorter than a standard tube of ChapStick and small enough to coexist on a keychain with a car fob, house keys, and a carabiner organizer without turning the whole setup into a pocket brick.

The All Things scraper at the butt end pulls more weight than most integrated tools on keychain knives. The brass-toned version on the Black + Fire colorway is particularly striking, a warm accent that contrasts sharply against the PVD-coated black blade and anodized black aluminum. Functionally, it’s wide enough to catch a bottle cap, thin enough to slot into most flathead screws, and sturdy enough to pry open a paint can lid without bending. The titanium key ring threads directly through the scraper, creating a clean attachment point that doesn’t require a separate lanyard hole or awkward clip orientation. In practice, this means the Elko hangs naturally on a carabiner or split ring without the blade rattling loose or the scales scratching against your keys. The Grove + Stainless colorway leans more understated, pairing an army green anodized finish with a brushed satin blade and stainless scraper that reads almost utilitarian. Black + Stainless offers the most versatile aesthetic, the kind of knife that doesn’t announce itself visually but still looks intentional when you pull it out to open a package in a meeting.

The Elko Gen 2 competes in a category that’s crowded with compromises. Most keychain knives either go too light and feel like toys, or pack in unnecessary features that bloat the form factor beyond what a keychain can reasonably support. The Benchmade Proper series offers superior blade steel and build quality, but at nearly double the price and with a larger closed footprint. Victorinox’s 58mm Swiss Army Knives deliver more tools in a similar package, but sacrifice blade length and lockup in the process. The Elko stakes out the middle ground: a single-purpose blade with one genuinely useful integrated tool, built well enough to last years but priced accessibly enough that you won’t hesitate to actually use it. It’s a knife designed to live on your keys, get deployed daily, and still feel like a deliberate choice five years from now rather than something you’ve been meaning to replace.

The post The James Brand Just Rebuilt Its Best Keychain Knife from Scratch first appeared on Yanko Design.