Nestled within the Chilean Andes, the new Atacama Large Millimeter-submillimeter Array (ALMA) is now open for space-staring business. The biggest, most complex telescope project to date, ALMA will be able to peer into the deeper reaches of space with "unprecedented power", according to astronomer Chris Hadfield. Covering around half of the universe's light spectrum, between infrared and radio waves, the new telescope should be able to detect distant planets, black holes and other intergalactic notables.

The Chilean desert's lack of humidity was a big reason for siting the telescope there, 16,400 feet above sea level, since dry air aids the scope's precision. It's a global project, though, with the US contributing $500 million, making it the NSF's biggest investment ever. From Japan, Fujitsu's contribution to exploring the final frontier consists of 35 PRIMERGY x86 servers, tied together with a dedicated (astronomy-centric) computational unit. The supercomputer will process 512 billion telescope samples per second, which ought to be more than enough to unlock a few more secrets of the cosmos.

Filed under: Science, Alt

Via: PopSci

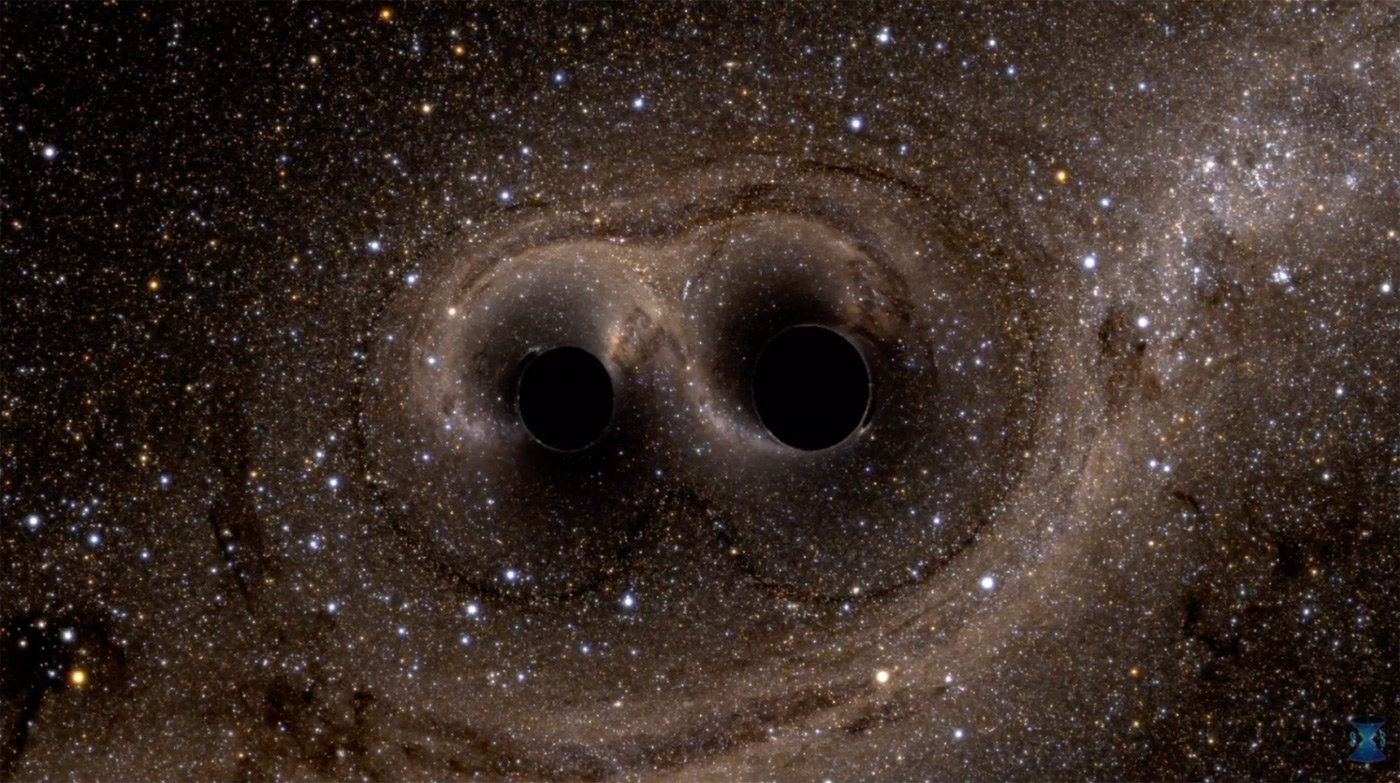

"We have detected gravitational waves. We did it," David Reitze, executive director of the Laser Interferometer Gravitational-Wave Observatory (LIGO), said at a press conference in Washington on Thursday. Reitze has good reason to be excited. LIGO's...

"We have detected gravitational waves. We did it," David Reitze, executive director of the Laser Interferometer Gravitational-Wave Observatory (LIGO), said at a press conference in Washington on Thursday. Reitze has good reason to be excited. LIGO's...

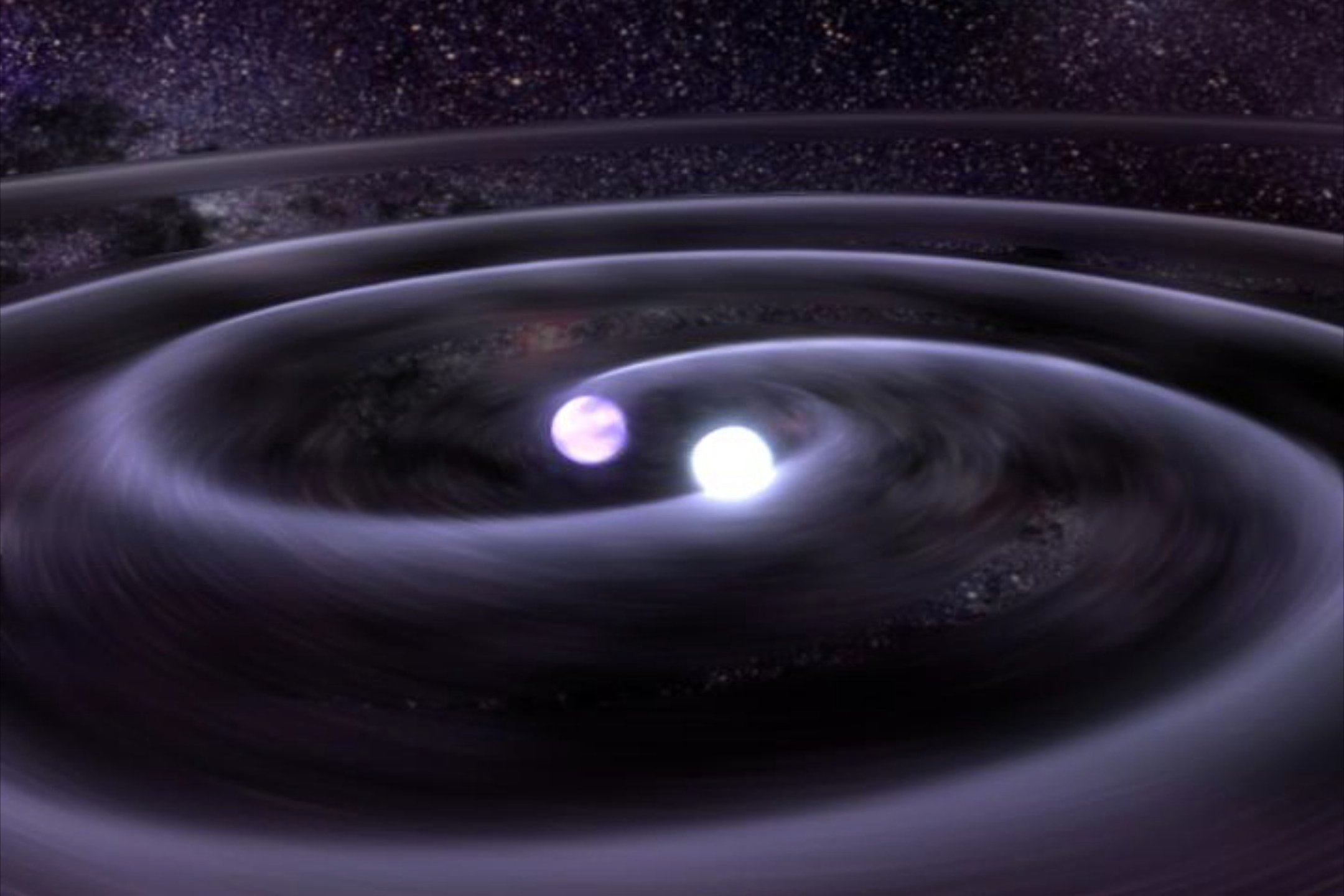
