Sure, you love the HDR pictures coming from your point-and-shoot, smartphone or perhaps even your Glass. But what if you want to Hangout in HDR? An enterprising grad student from the University of Toronto named Tao Ai -- under the tutelage of Steve Mann -- has figured out how to shoot HDR video in real time. The trick was accomplished using a Canon 60D DSLR running Magic Lantern firmware and an off-the-shelf video processing board with a field-programmable gate array (FPGA), plus some custom software to process the video coming from the camera. It works by taking in a raw feed of alternately under- and overexposed video and storing it in a buffer, then processing the video on its way to a screen. The result is the virtually latency-free 480p HDR video at 60 frames per second seen in our video after the break.
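For the curious, here is a rough Python sketch of the buffer-and-merge idea described above. It is not the researchers' FPGA implementation, and the article doesn't say how the two exposures are combined, so the weighting scheme here (favoring samples that sit far from clipping) is purely our assumption. The names `fuse_pair` and `hdr_stream` are made up for illustration, and frames are simplified to flat lists of 8-bit values.

```python
from collections import deque


def fuse_pair(frame_a, frame_b):
    """Blend two differently exposed frames, pixel by pixel.

    Assumption: each sample is weighted by its distance from clipping
    (0 or 255), so well-exposed pixels dominate the result.
    """
    fused = []
    for a, b in zip(frame_a, frame_b):
        weight_a = min(a, 255 - a) + 1  # +1 keeps the total weight above zero
        weight_b = min(b, 255 - b) + 1
        fused.append((a * weight_a + b * weight_b) // (weight_a + weight_b))
    return fused


def hdr_stream(frames):
    """Yield a fused frame for every incoming frame once two are buffered.

    The two-slot buffer always holds one under- and one overexposed frame
    when the input alternates, so output keeps pace with the input rate.
    """
    buffer = deque(maxlen=2)
    for frame in frames:
        buffer.append(frame)
        if len(buffer) == 2:
            yield fuse_pair(buffer[0], buffer[1])


if __name__ == "__main__":
    # Hypothetical two-pixel frames: dark, bright, dark.
    feed = [[0, 100], [255, 200], [0, 100]]
    for merged in hdr_stream(feed):
        print(merged)
```

Because the buffer slides by one frame at a time, each new frame is merged with the one before it, which is one plausible way a 60 fps alternating feed could yield 60 fps output with only a single frame of delay.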

When we asked whether higher resolution and faster frame rates are possible, we were told that the current limitations are the speed of the imaging chip on the board and the bandwidth of the memory buffer. The setup we saw used a relatively cheap $200 Digilent board with a Xilinx chip, but a 1080p version is in the works using a more expensive board and DDR3 memory. Of course, the current system is for research purposes only, but the technology can be applied in consumer devices -- as long as they have an FPGA and offer open-source firmware. So, should the OEMs get with the program, we can have HDR moving pictures to go with our stationary ones.
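To get a feel for why memory bandwidth becomes the bottleneck, here is a back-of-the-envelope calculation in Python. The frame dimensions are our assumptions (640x480 for "480p" and 1920x1080 for "1080p", both at 60 frames per second); the article doesn't give the exact figures, and `pixel_rate` is just an illustrative helper.

```python
def pixel_rate(width, height, fps):
    """Pixels that must pass through the buffer each second."""
    return width * height * fps


# Assumed frame sizes; the source only says "480p" and "1080p".
rate_480p = pixel_rate(640, 480, 60)
rate_1080p = pixel_rate(1920, 1080, 60)
ratio = rate_1080p / rate_480p

if __name__ == "__main__":
    print(f"480p60:  {rate_480p:,} pixels/s")
    print(f"1080p60: {rate_1080p:,} pixels/s")
    print(f"1080p needs {ratio:.2f}x the throughput")
```

Under those assumptions, the 1080p version has to move several times as many pixels per second through the buffer, which squares with the move to a pricier board and DDR3 memory.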

Filed under: Cameras, HD

Comments

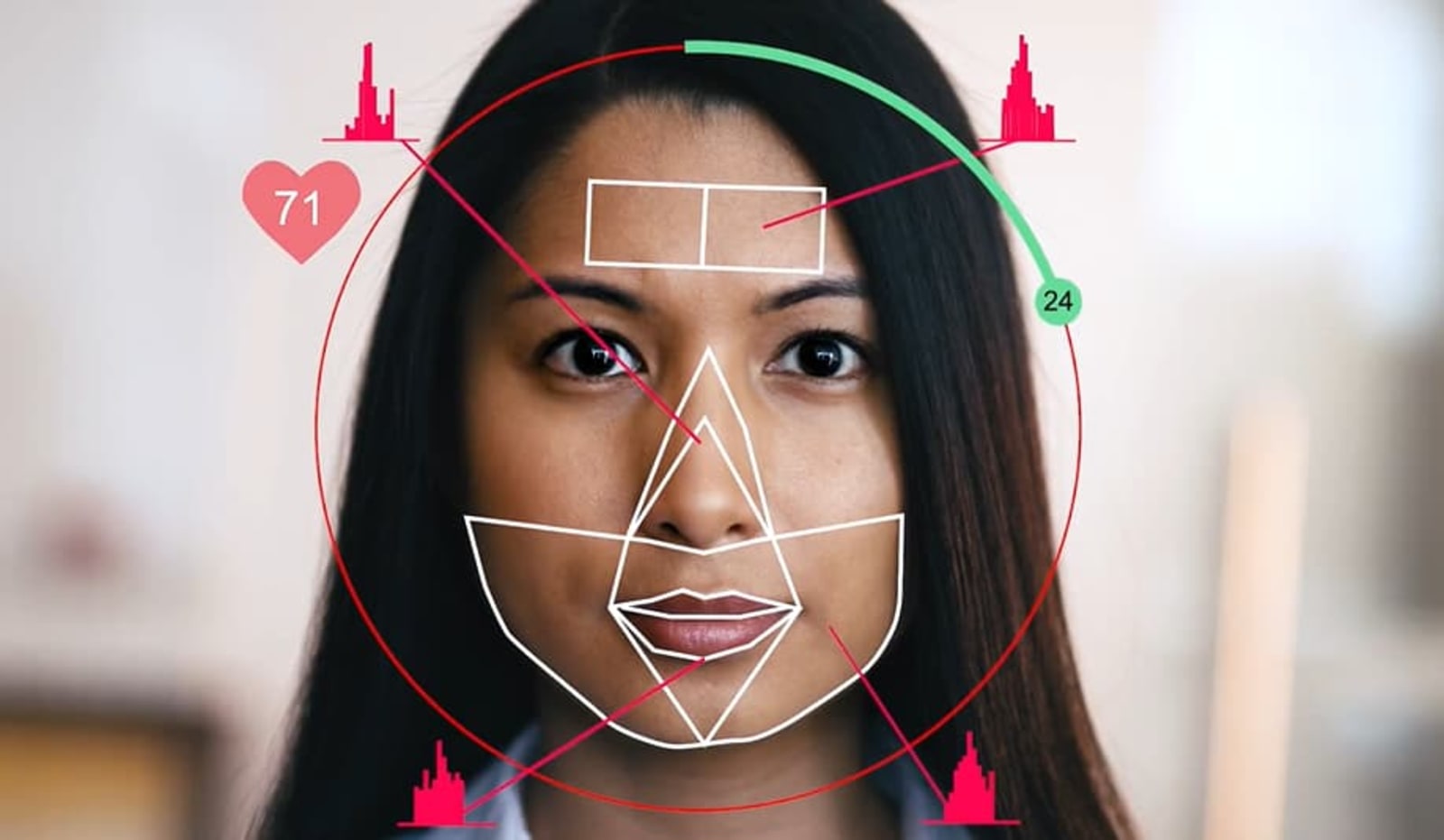

In the near future, you might not have to traipse to your doctor or pharmacy to determine your blood pressure. Researchers have figured out a way to accurately measure it with your phone's camera.

In the near future, you might not have to traipse to your doctor or pharmacy to determine your blood pressure. Researchers have figured out a way to accurately measure it with your phone's camera.

In the near future, you might not have to traipse to your doctor or pharmacy to determine your blood pressure. Researchers have figured out a way to accurately measure it with your phone's camera.

In the near future, you might not have to traipse to your doctor or pharmacy to determine your blood pressure. Researchers have figured out a way to accurately measure it with your phone's camera.

In addition to penning 37 plays, William Shakespeare was a prolific composer of sonnets -- crafting 154 of them during his life. Now, more than 400 years after his death, the Bard's words are influencing a new generation of poets. It's just that thes...

In addition to penning 37 plays, William Shakespeare was a prolific composer of sonnets -- crafting 154 of them during his life. Now, more than 400 years after his death, the Bard's words are influencing a new generation of poets. It's just that thes...

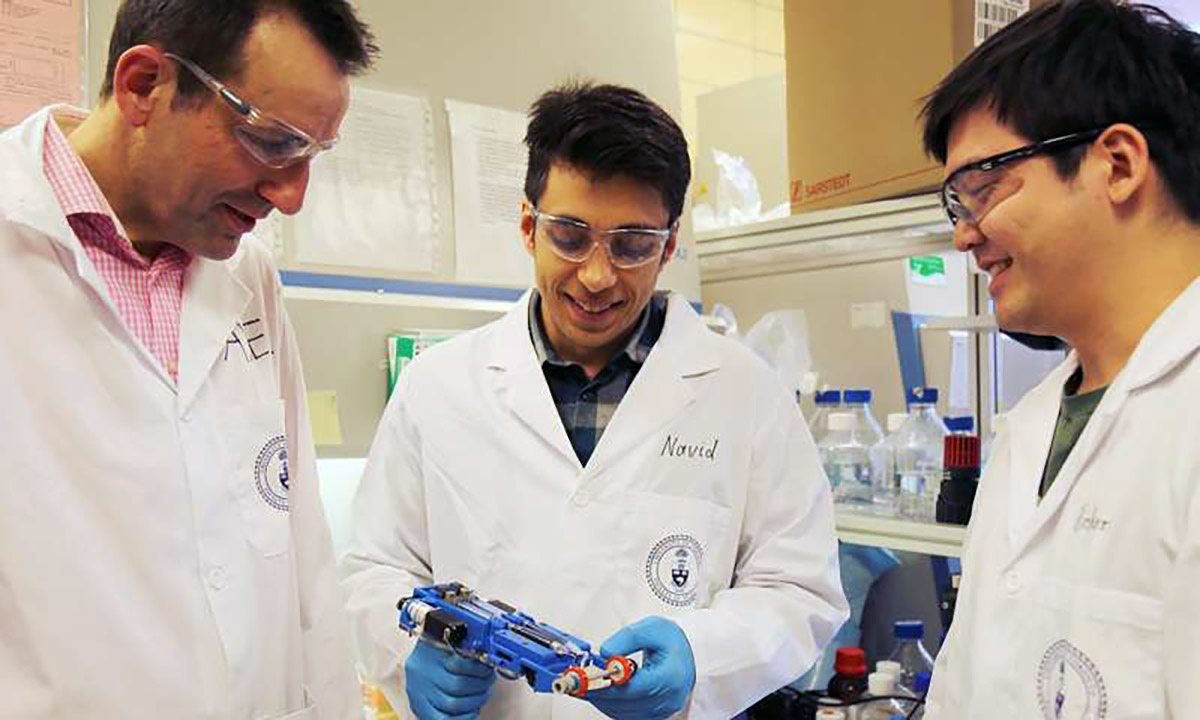

A handheld device that deposits skin directly onto patients' wounds could revolutionize the way that doctors treat burns and other wounds. The printer, developed by University of Toronto researchers, is used like a white-out tape dispenser, rolling o...

A handheld device that deposits skin directly onto patients' wounds could revolutionize the way that doctors treat burns and other wounds. The printer, developed by University of Toronto researchers, is used like a white-out tape dispenser, rolling o...

Today on In Case You Missed It: Scientists managed to turn taste on and off in mice by activating and silencing brain cells, putting to bed the notion that taste is determined by the tongue. University of Toronto cancer researchers used a patient's g...

Today on In Case You Missed It: Scientists managed to turn taste on and off in mice by activating and silencing brain cells, putting to bed the notion that taste is determined by the tongue. University of Toronto cancer researchers used a patient's g...

Modern medicine still takes a decidedly ham-fisted approach to treating cancer -- it attacks with radiation and chemotherapy drugs that are just as toxic to healthy tissues as they are to tumors. What's more the effects of these treatments vary betwe...

Modern medicine still takes a decidedly ham-fisted approach to treating cancer -- it attacks with radiation and chemotherapy drugs that are just as toxic to healthy tissues as they are to tumors. What's more the effects of these treatments vary betwe...

Back in April, South Korea required that wireless carriers install parental control apps on kids' phones to prevent young ones from seeing naughty content. It sounded wise to officials at the time, but it now looks like that cure is worse than the...

Back in April, South Korea required that wireless carriers install parental control apps on kids' phones to prevent young ones from seeing naughty content. It sounded wise to officials at the time, but it now looks like that cure is worse than the...